White House Moves to Block Utah AI Safety Bill

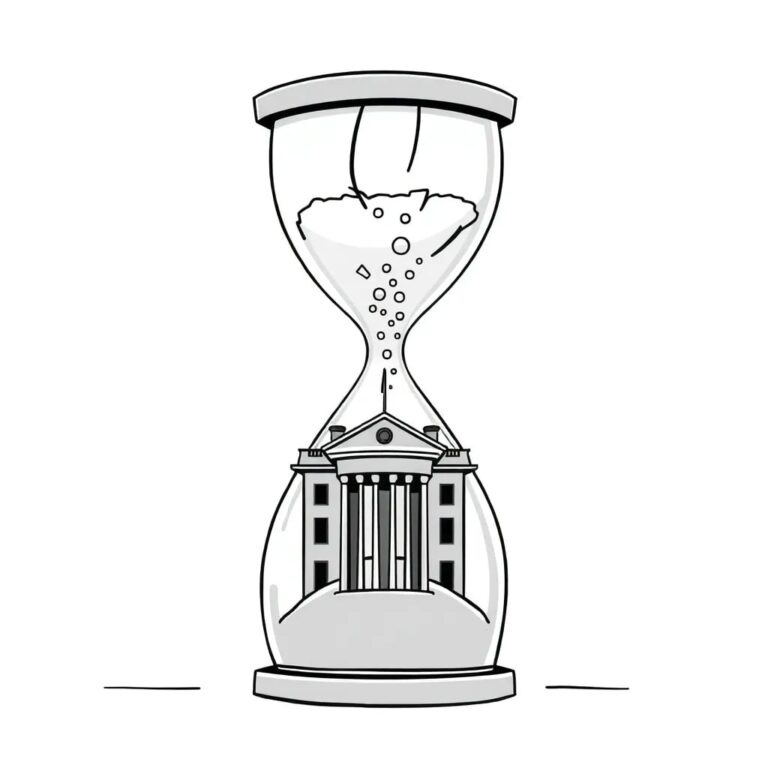

The White House’s recent move to block Utah’s AI safety bill has ignited a heated national debate over the future of AI regulation. Utah’s House Bill 286, known as the Artificial Intelligence Transparency Act, was introduced with the backing of a diverse coalition of legislators and civic advocates and would impose significant safety and transparency obligations on developers of advanced AI systems.

Key Provisions of House Bill 286

The bill outlined several straightforward yet ambitious requirements:

- Public safety and child protection plans from AI firms

- Whistleblower protections

- Clear disclosure of measures taken to mitigate cybersecurity risks

Supporters, including Republican lawmakers and grassroots organizations, viewed HB 286 as a vital step toward transparency in AI, one that would give families essential safeguards as the technology increasingly permeates daily life.

Federal Opposition

On February 12, the White House sent a terse memorandum to Utah’s Republican leadership labeling the bill “unfixable” and fundamentally incompatible with the administration’s vision for AI regulation. The memorandum offered little legal justification, instead stressing the need for a cohesive federal approach: a “One Rulebook” for AI across all states.

This federal stance rests on a December executive order, signed by President Trump, which explicitly seeks to block state AI initiatives that diverge from federal standards. The order instructs the Attorney General to establish an AI Litigation Task Force to challenge state laws that conflict with the federal framework. Administration officials argue that a patchwork of varying state regulations would hinder innovation, fragment markets, and impose conflicting compliance obligations on developers.

Child Safety Concerns

Federal officials had previously assured the public that measures aimed at child safety and youth protection would be exempt from this preemption. Because HB 286 itself requires AI firms to produce child protection plans, the decision to block it appears to contradict those assurances and has drawn significant criticism.

Broader Implications

Utah’s situation is emblematic of a larger, unresolved conflict over who should set the rules for the coming technological era. Despite numerous attempts, Congress has yet to pass comprehensive AI legislation, and efforts to insert bans on state-level AI regulation into broader federal packages have repeatedly faltered in the face of bipartisan resistance.

Advocates for state action argue that Washington has been slow to respond to the rapid pace of AI development. They contend that states are better positioned to address pressing issues such as algorithmic harm, children’s exposure to unfiltered content, and the broader lack of transparency surrounding powerful AI systems.

Legal Concerns

Legal experts warn that relying on executive and regulatory authority, rather than explicit congressional approval, to override state laws raises serious constitutional questions, because the power to preempt state law ordinarily derives from an act of Congress.

Federal officials counter that any deviation from a unified standard could undermine national competitiveness and regulatory clarity, ultimately harming the very people that state laws aim to protect.

Future Implications

The outcome of this debate will have lasting consequences. If the “One Rulebook” vision prevails, AI companies could face a more predictable landscape, but at the cost of state autonomy and potentially weaker consumer protections. Conversely, if states like Utah successfully assert their right to innovate and safeguard their residents, the United States might adopt a more pluralistic and adaptive approach to technology governance, though businesses would then have to navigate a patchwork of local regulations.

The White House’s move to block Utah’s AI safety bill highlights the ongoing struggle between state and federal authority over AI, with significant implications for the future of AI governance in the United States.