Washington Passes New AI Laws to Crack Down on Misinformation and Protect Minors

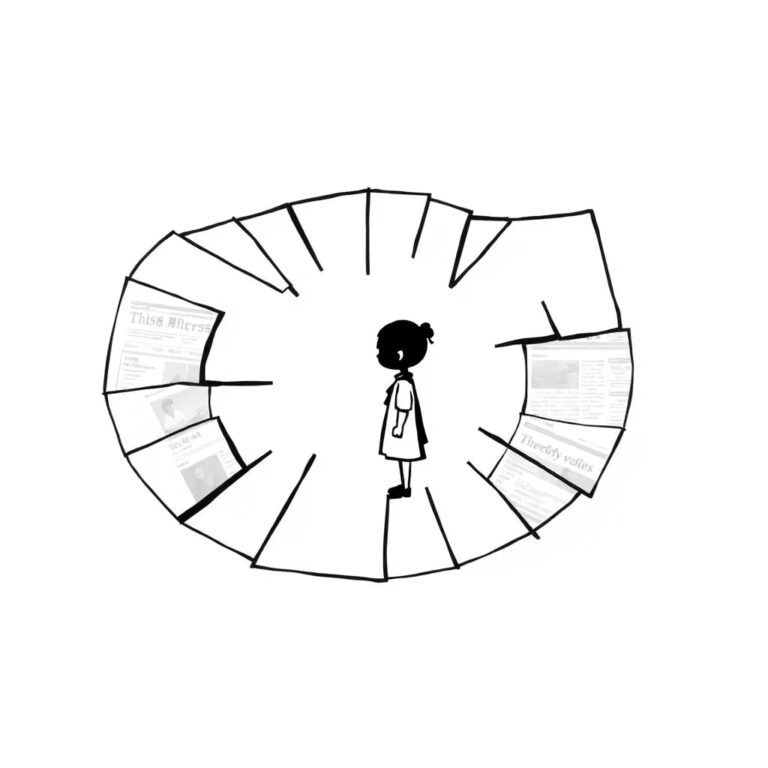

Washington State has taken a significant step in regulating the use of artificial intelligence with the recent signing of two bills by Governor Bob Ferguson. These laws aim to address concerns surrounding misinformation and the safety of minors interacting with AI technologies.

Regulating AI-Generated Misinformation

One of the key pieces of legislation, House Bill 1170, targets AI-generated misinformation. Under this law, companies like OpenAI and Anthropic are required to implement new disclosures in their chatbots for users in Washington. The bill mandates that when content is significantly altered using generative AI, it must now be traceable through watermarks or metadata.

Governor Ferguson explained the need for such regulations, stating, “I’m confident I’m not the only Washingtonian who often sees something on my phone and wondering to myself, ‘Is that AI or is it real?’” His remark highlights the growing concern over the authenticity of information in today’s digital landscape.

Establishing Guidelines for AI Companions

The second piece of legislation, House Bill 2225, focuses on AI chatbots that mimic human friends or companions. This law specifically applies to services like ChatGPT and Claude, while excluding more specialized chatbots such as those used for customer service.

Key provisions of this bill include:

- Chatbots must inform users at the beginning of each conversation, and every three hours during ongoing chats, that they are not human.

- For users under the age of 18, chatbots must repeat the not-human disclosure every hour.

- The law prohibits chatbots from engaging in sexually explicit conversations with minors.

- Manipulative engagement techniques, such as pressuring minors to continue conversations, are strictly forbidden.
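The disclosure timing described above can be illustrated with a minimal sketch. It assumes only the intervals reported here (at the start of a conversation, every three hours, and every hour for minors). The function and constant names are hypothetical and do not come from the bill, and how a service determines that a user is under 18 is outside the sketch.

```python
from datetime import datetime, timedelta
from typing import Optional

# Intervals as reported in this article; names are hypothetical illustrations.
ADULT_INTERVAL = timedelta(hours=3)
MINOR_INTERVAL = timedelta(hours=1)


def disclosure_due(now: datetime,
                   last_disclosed: Optional[datetime],
                   is_minor: bool) -> bool:
    """Return True if a "you are talking to an AI" notice should be shown now."""
    # Start of a conversation: nothing has been disclosed yet.
    if last_disclosed is None:
        return True
    # Minors get the shorter reminder interval.
    interval = MINOR_INTERVAL if is_minor else ADULT_INTERVAL
    return now - last_disclosed >= interval
```

In this sketch, a chat service would call `disclosure_due` before each reply and record the time whenever it shows the notice.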

Governor Ferguson emphasized the importance of these regulations, stating, “AI has incredible potential to transform society… there are risks that we must mitigate as a state, especially to young people.”

Protecting Mental Health

Under these new regulations, AI chatbots are also prohibited from encouraging suicide or self-harm, including eating disorders, or providing information related to them. Companies must establish protocols for flagging conversations that reference self-harm and for connecting users with mental health services.

These laws come in response to alarming reports of teenage suicides linked to prolonged interactions with AI companions, as well as a reported rise in mental health issues among users of all ages tied to heavy engagement with AI technologies.

Overall, Washington’s proactive approach to AI regulation sets a precedent for other states and highlights the necessity of balancing technological advancement with public safety and mental health considerations.