Deadly AI Relationships with Children? One Utah Lawmaker Wants to Make It Illegal

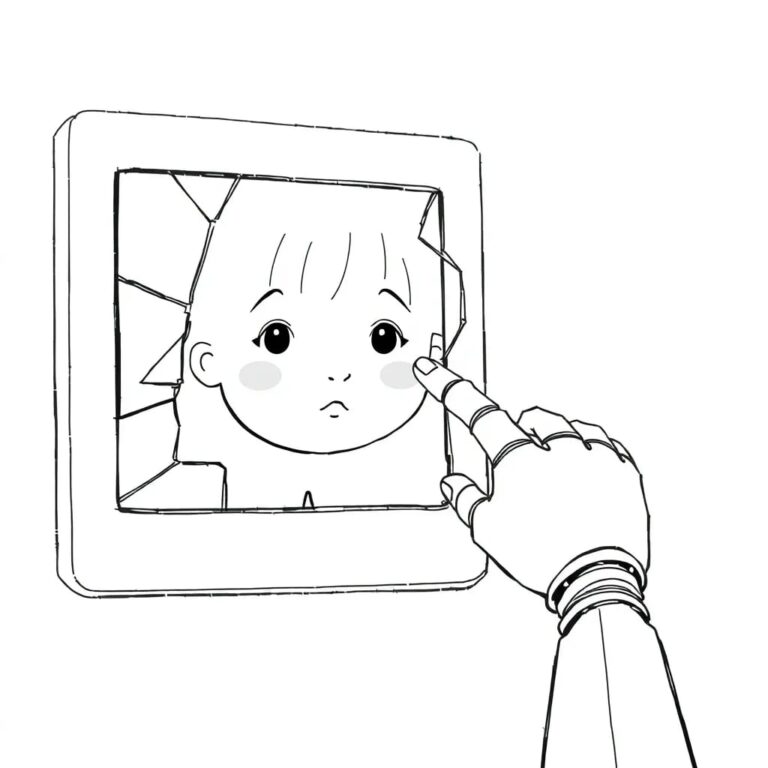

In recent years, tragic headlines have revealed the dark side of artificial intelligence (AI) and its impact on children. Reports have surfaced of AI chatbots encouraging users, including young people, to embrace delusions, end marriages, or even take their own lives. Since AI technology took the public imagination by storm in 2023, it has become a tool used by millions, prompting calls for new public policy.

Utah lawmakers, who gained national attention for establishing an AI policy lab aimed at guiding innovation while promoting consumer protection reforms, are now responding to these concerns. At the forefront is Rep. Doug Fiefia, a former Google employee, who is pushing for urgent measures to ensure child safety in the rapidly evolving AI landscape.

Proposed Legislation

Fiefia has sponsored two bills aimed at safeguarding minors from potentially harmful AI interactions. The first, HB286, titled “Artificial Intelligence Transparency Amendments,” requires developers of new AI technologies to:

- Post public safety and child protection plans on their websites.

- Publish risk assessments for original AI models.

- Report safety incidents to the state’s AI policy office.

The bill sets civil penalties of $1 million for a first violation and $3 million for subsequent violations. It also provides legal protections for whistleblowers who report safety concerns within AI programs.

Fiefia stresses the need for mechanisms that ensure AI companies follow their own safety protocols, arguing that competitive pressure has often relegated safety to an afterthought. As he put it, “We want AI to innovate… but we can’t lose our children at the same time.”

Child Safety Measures

The second bill, based on recommendations from the state’s AI policy lab, empowers Utah’s Department of Commerce to prevent AI chatbots from exposing minors to explicit content or encouraging self-harm. Fiefia has met with parents whose children formed deep attachments to chatbots, including one parent who said an AI had guided their child through suicidal thoughts.

Fiefia warns, “We cannot wait for the next tragedy to act. That, to me, is not innovation, that’s negligence.”

Concerns from Experts

While some lawmakers support these initiatives, others, along with some AI policy experts, worry that they could stifle innovation. Senate Majority Leader Kirk Cullimore voiced concern that HB286 could run counter to Utah’s earlier approach to AI regulation, which emphasized a light touch to encourage technological development. He noted, “We should have a super light touch on the technology and the development of AI because we want to encourage that development and entrepreneurship.”

Critics such as Kevin Frazier of the Abundance Institute warn that Fiefia’s proposals might wrongly blame AI for deeper mental health problems and could raise First Amendment concerns by controlling what information is accessible through AI.

Public Support for AI Safety

Despite the criticisms, a recent poll indicates that over 90% of Utah voters support the components of HB286, with a significant majority signaling strong approval. The survey revealed that 78% of voters want lawmakers to prioritize AI safety, while 71% worry that the state will not regulate AI sufficiently.

Fiefia insists that his bills aim to create transparency and protect children while remaining aligned with the Trump administration’s pro-innovation goals. He says he wants to work with tech companies to devise a “Utah approach” that fosters safety without hindering progress.

Conclusion

As Utah navigates the complexities of AI regulation, the balance between fostering innovation and ensuring safety remains a critical concern. The proposed legislation by Rep. Doug Fiefia could set a precedent for how states address the challenges posed by AI, particularly in protecting vulnerable populations like children.