A Conceptual Model to Guide AI Risk Governance Strategies

Introduction

In recent years, risk mitigation has grown increasingly salient in the AI governance landscape. Across the world, countries and multilateral organizations have progressed from issuing high-level statements about the risks posed by AI to adopting frameworks, laws, and policies that clarify the rights and values that AI developers and users ought to respect.

Key examples include:

- European Union Artificial Intelligence Act (Regulation (EU) 2024/1689)

- Rules from the Biden-Harris Administration governing federal agencies’ use of AI

- A unanimous United Nations General Assembly resolution on trustworthy AI (UN G.A. Res. 78/265)

These policies articulate the public interests that must be protected against AI risks, and they have led to the establishment of new AI safety institutes that aim to advance the responsible design, evaluation, and use of AI.

Concerns About AI Capabilities

Policymakers and stakeholders are increasingly worried about the growing capabilities of AI models. Consequently, many emerging AI risk management actions focus on:

- Improved testing and evaluation of AI models

- Safeguards on model inputs and outputs

- Limiting access to AI model weights

Supporters of a model-centric governance approach argue that interventions during the model training and release stages can help reduce downstream risks, particularly the misuse of generative AI models. However, some critics contend that this approach is infeasible and can hinder innovation and economic competition.

The Need for a Conceptual Framework

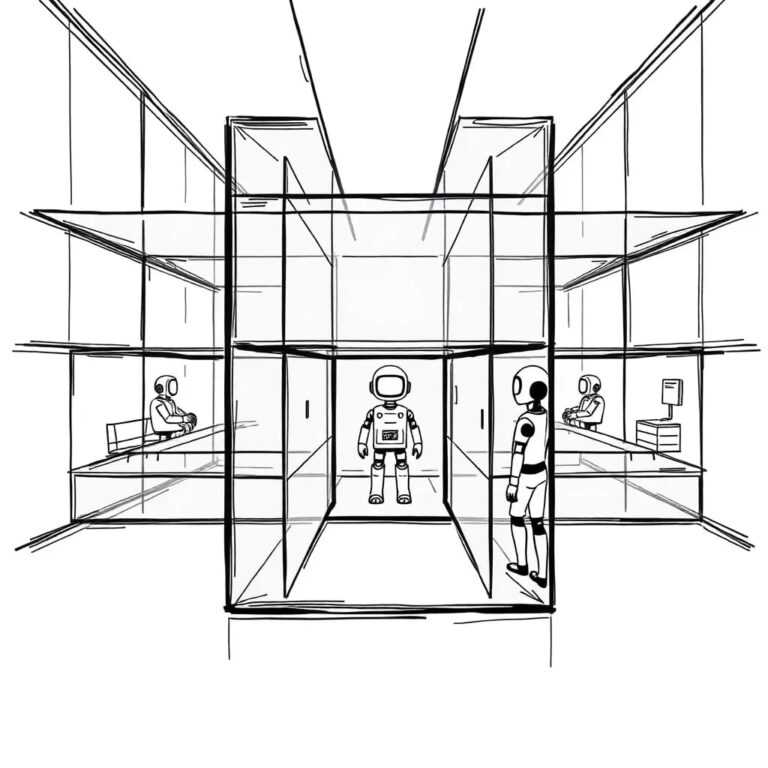

The current lack of a conceptual framework hampers AI risk management by narrowing the methods, tools, and expertise brought to bear on it. This paper aims to structure the ongoing debate on AI risk management by assessing the points in the sociotechnical system at which interventions can be made.

AI risk management must focus on protecting the public's rights and safety. The paper argues for recentering risk management on preventing harms at the sociotechnical level, on the grounds that risks can be mitigated effectively only with a comprehensive understanding of the system's components.

Key Analytic Shifts

Part I surveys several AI risk management frameworks, examining their focus on technical systems and highlighting their limitations. The frameworks include:

- The EU AI Act

- U.S. guidance on responsible AI use

- The UK’s AI Security Institute research agenda

- The NIST AI Risk Management Framework

Part II introduces the proposed conceptual framework, advocating for:

- A sociotechnical approach to risk management

- A preference for interventions aimed at preventing harms rather than merely reducing future hazards

Example Case Study

Part III examines a specific instance of AI risk, image-based sexual abuse, to illustrate how the proposed framework can be applied to mitigate that risk.

Recommendations to Policymakers

Part IV outlines four key recommendations:

- Develop a sociotechnical system map to identify relevant components related to the harm being investigated.

- Task deployers of AI systems with assessing and mitigating specific use case risks.

- Reduce reliance on developers for independent risk mitigation activities.

- Invest in the infrastructure necessary for sociotechnical evaluations and the range of risk mitigation techniques.

Limitations of Current AI Governance Frameworks

While new governance efforts aim to manage AI risks, several weaknesses persist:

- Insufficient attention to the relationality of risk

- Over-reliance on developers for risk management

- Emphasis on technocratic tools

- Model-centric mitigations that fail to address real-world harms

The paper emphasizes that harms are often the product of complex interactions within sociotechnical systems rather than of the capabilities of individual AI models alone. A comprehensive understanding of these interactions is crucial for effective risk management.