AI Sealed the Hood Shut: The Risks of Unserviceable Code

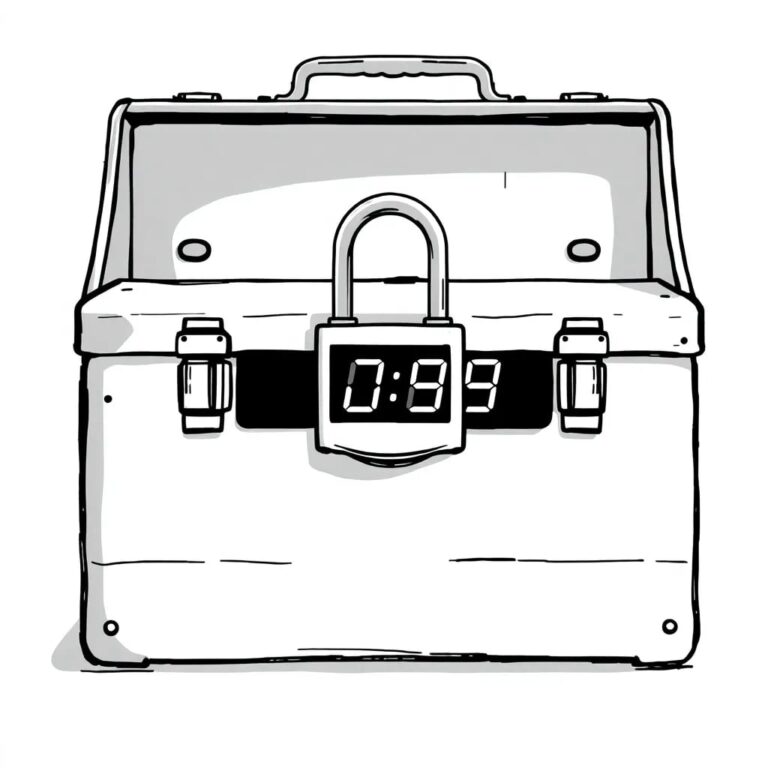

For most of the history of the internal combustion engine, auto repair shops could fix nearly anything with basic tools. Modernization changed that, much as electronic fuel injection replaced carburetors that a mechanic could tune by hand. Today, as AI-assisted coding displaces hand-written code, fewer developers are looking “under the hood” of their web applications.

The Risks of Bad Code

One significant risk is the proliferation of bad code. The more critical concern, however, is the loss of the foundational skills needed to service and maintain code. When every system is a complex amalgamation of software functions and libraries, the ability to manage and maintain it diminishes. Apps may run, but fewer people understand the underlying mechanisms.

As explored in Robert Pirsig’s work, “Zen and the Art of Motorcycle Maintenance”, when we lose our connection to the underlying machinery, we also lose our connection to quality. This loss of serviceability is not merely inconvenient; it introduces a new form of risk.

Evidence of the Problem

Aikido’s 2026 State of AI in Security & Development report indicates that one in five organizations has already suffered a significant incident due to AI-generated code, while nearly 70% have discovered vulnerabilities introduced by AI assistants. When flaws occur, it becomes unclear who bears the responsibility.

When asked who is accountable for an AI-introduced breach, respondents split their answers among engineering teams, security teams, and vendors. That spread suggests governance has yet to adapt to the automation era.

Impact on Early-Career Engineers

Early-career engineers often work almost exclusively at the abstraction layer. They may ship code more rapidly, but this speed comes at the expense of exposure to systems, networks, and failure modes. Such a shift weakens the human judgment needed to scrutinize AI-generated outputs before they reach production.

AI as an Amplifier of Culture

AI-generated code serves as an amplifier for an organization’s core values and security culture. In organizations with solid security DNA, AI tools enhance that culture. Conversely, in organizations lacking discipline and basic risk management, autonomous software development amplifies an already immature security culture.

Accountability and Consequences

Consider a proprietary trading firm that conducts an algorithm experiment. If the algorithm fails, the firm incurs financial loss, but accountability may be less contentious. Now imagine a healthcare scenario where AI assists clinicians. The stakes are significantly higher, and finger-pointing will be inevitable if a patient suffers due to a non-deterministic algorithm.

The Dangers of AI in Sensitive Domains

Examples abound of AI failures, including a coding agent that mistakenly deleted a production database. Such incidents reveal that QA and testing teams often shoulder the blame. If AI coding agents are seen as junior developers prone to errors, prioritizing speed over quality becomes a serious risk.

The Insurance Industry’s Response

The insurance industry appears ill-prepared for the implications of agentic AI. Insurers are beginning to consider coverage for negligence, intellectual property infringement, and regulatory liabilities. However, many large insurers are also seeking to exclude AI-related risks from existing policies due to the unpredictable nature of non-deterministic systems.

The Dumbing Down of Coding

Junior developers’ roles are evolving rather than disappearing; entry-level SOC analysts now monitor algorithms for malicious log events, while marketing interns create slide decks without graphic designers. This shift has led to a perception of “slop” in AI outputs, which undermines quality in creative fields.

In software development, we face a similar decline in quality. The notion that AI can replace human creativity and intentionality threatens the essence of quality coding.

Tool Sprawl and Security

Aikido’s research indicates that teams experiencing security incidents tend to use more vendor tools than those that do not. This cycle perpetuates the creation of new security problems while existing ones remain unsolved. Tool sprawl has been an inherent issue within the security industry for years.

There are two types of security professionals: certified and qualified, with limited overlap. Many certified professionals lack a fundamental understanding of how the internet operates, and the same can be said for CISOs who have yet to learn that all tools will eventually fail.

The Gap Between Policy and Practice

A SOC 2 auditor evaluates the discrepancy between written policy and actual practice. Companies can face scrutiny for having perfect yet unenforceable policies or, conversely, for lacking written policies altogether. Caution is warranted when asking an LLM to draft policies, because the result may describe controls more sophisticated than the organization can actually enforce.

Governance Questions for Boards

Boards should probe: “Can you pinpoint where AI-generated code is currently running in production and who is accountable for its outcomes?” CISOs must be prepared to answer by identifying instances of AI-generated code, tracking approval, demonstrating review processes, and explaining guardrails in place to protect high-risk systems.
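One way a CISO might prepare for that question is to keep a simple inventory of production components and flag the ones where AI-generated code lacks an owner, a recorded review, or guardrails. The sketch below is illustrative only: the `Component` fields, the `governance_gaps` function, and the example service names are all hypothetical, not drawn from any particular tool or framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Component:
    """One deployed service or module (hypothetical inventory record)."""
    name: str
    ai_generated: bool                  # contains AI-generated code
    high_risk: bool                     # e.g. handles payments or patient data
    owner: Optional[str] = None         # person accountable for outcomes
    reviewed_by: Optional[str] = None   # human who approved the change
    guardrails: tuple = ()              # e.g. ("sast", "manual-approval")


def governance_gaps(inventory):
    """Return {component name: [gaps]} for AI-generated code in production."""
    gaps = {}
    for component in inventory:
        if not component.ai_generated:
            continue  # only AI-generated code is in scope for this check
        problems = []
        if not component.owner:
            problems.append("no accountable owner")
        if not component.reviewed_by:
            problems.append("no recorded human review")
        if component.high_risk and not component.guardrails:
            problems.append("high-risk system without guardrails")
        if problems:
            gaps[component.name] = problems
    return gaps


# Made-up inventory, purely for illustration.
EXAMPLE_INVENTORY = [
    Component("auth-service", True, True, owner="alice",
              reviewed_by="bob", guardrails=("sast", "manual-approval")),
    Component("billing-api", True, True, owner="carol"),
    Component("report-export", True, False, reviewed_by="dave"),
    Component("legacy-batch", False, True),
]

if __name__ == "__main__":
    for name, problems in governance_gaps(EXAMPLE_INVENTORY).items():
        print(f"{name}: {', '.join(problems)}")
```

The check is only as good as the inventory behind it: if nobody records which components contain AI-generated code, the board's question stays unanswerable no matter how the report is generated.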

The Digital Potato Famine

Consider a scenario in which a threat actor neglects to QA their malware and it ends up affecting every iOS device running the latest OS version. This is a monoculture risk, because a large share of users typically update to the latest software. In contrast, Android’s numerous OEM variants provide a degree of natural immunity.

NIST CSF 2.0 positions governance at the forefront, but governance controls become ineffective if software and security expertise continue to wane. AI amplifies both positive and negative patterns, and it disseminates flawed logic rapidly.

Conclusion

The loss of the ability to service our own systems equates to a loss of quality. Boards must be proactive in understanding the implications of AI-generated code, ensuring proper governance is in place, and recognizing that the future of software development hinges on maintaining a balance between automation and human oversight.