AI In Compliance: Why Replacement Is The Wrong Goal

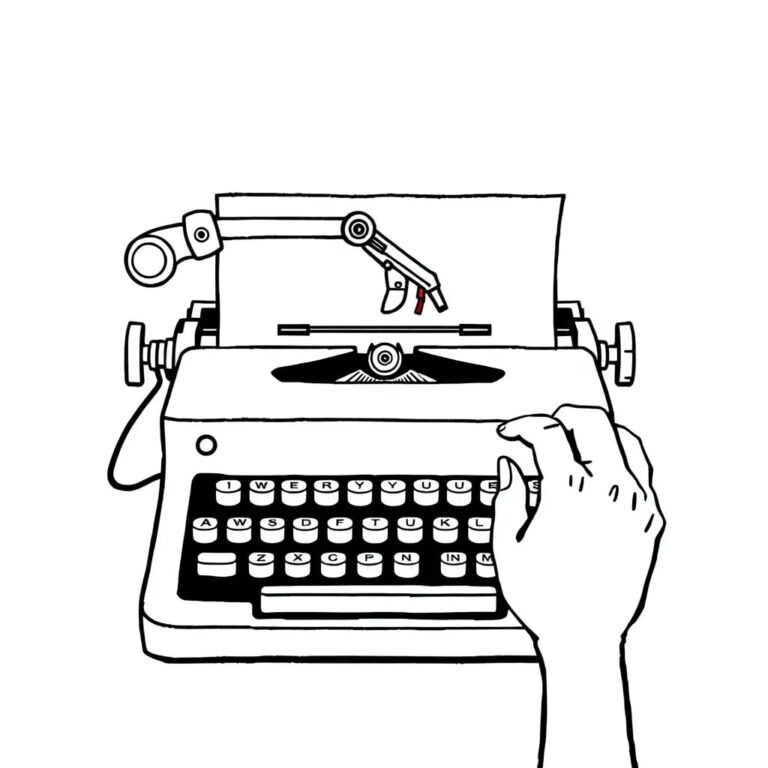

Across fintech panels and boardroom discussions, one promise keeps resurfacing — artificial intelligence will replace compliance teams. It sounds efficient. It sounds inevitable. It is also dangerously incomplete.

There is no question that AI is transforming fraud detection, anti-money laundering (AML), and risk monitoring. Global fraud losses run into hundreds of billions of dollars annually, and institutions are under pressure to detect increasingly sophisticated, networked, and real-time financial crime. Unsurprisingly, more than half of financial institutions are actively investing in AI-driven fraud and compliance capabilities. The momentum is real. The investment is real.

The Real Problem Was Never Human Judgment

For decades, compliance teams have worked under enormous operational strain. In some institutions, traditional rule-based systems generate false-positive rates as high as 30–40 percent. Investigators spend substantial time reviewing alerts that ultimately pose no real risk. The issue was never that compliance professionals lacked capability. The issue was that systems generated too much noise.

AI promises to reduce that noise. It can:

- Identify complex patterns across millions of transactions

- Detect behavioral anomalies beyond static thresholds

- Prioritize high-risk cases intelligently

- Learn from past investigative decisions

In other words, AI can dramatically improve efficiency. But efficiency is not the same as elimination.

The Illusion of Full Automation

The idea that AI can autonomously “handle compliance” ignores a fundamental truth: compliance is not merely pattern detection. It is judgment, accountability, documentation, and regulatory interpretation. An algorithm may flag unusual behavior, but a human must determine intent, materiality, and reporting obligation. More importantly, regulators do not hold algorithms accountable; they hold institutions accountable. And institutions ultimately rely on people.

There is also a governance dimension that is often overlooked amid the enthusiasm for AI. Financial regulators increasingly expect explainability, auditability, and traceability in automated decision-making systems. A black-box model whose outputs cannot be explained may improve detection rates, but it introduces a different kind of supervisory risk. Replacing humans with opaque automation may reduce headcount, but it can increase exposure.

AI Does Not Replace Expertise — It Depends on It

Perhaps the most misunderstood aspect of AI in compliance is its dependence on data. AI models do not operate in isolation. They require:

- Structured historical datasets

- Clean and consistent data architecture

- Labeled outcomes from past investigations

- Continuous human feedback loops

A significant portion of AI program effort is spent not on model building, but on data preparation, structuring, and labeling. Who labels suspicious behavior as confirmed fraud? Who categorizes false positives? Who determines whether a transaction triggered regulatory reporting? Humans do.

AI in compliance is trained on human judgment. It refines human decisions. It scales human pattern recognition. But it does not originate institutional accountability. Without structured, context-rich data — built and maintained by experienced professionals — AI systems degrade. They drift. They misclassify. They inherit bias. They overfit to yesterday’s risk patterns.

The narrative of replacement overlooks this dependency entirely.

The Risk of Over-Automation

There is another risk that deserves attention: compliance fatigue may be replaced by overconfidence. If institutions assume AI can “handle” risk autonomously, two unintended consequences may emerge:

- Reduced human oversight

- Excessive reliance on model outputs

Neither is prudent in regulated environments. Financial crime evolves precisely because criminals adapt to the systems designed to stop them. Adversaries test detection thresholds. They probe model blind spots. They exploit operational gaps. AI systems, if left unchecked, can embed outdated assumptions or amplify flawed patterns. Without continuous supervision, even sophisticated models can become fragile.

Compliance, at its core, is not about detection alone. It is about resilience — the ability to adapt responsibly when risk patterns shift. Resilience requires supervision. And supervision requires humans.
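One way to make "continuous supervision" concrete is to check whether a model's score distribution has shifted since it was validated. The sketch below uses the Population Stability Index (PSI), a drift measure common in credit-risk model monitoring; it is an illustration, not a method any institution mentioned here is known to use. It assumes risk scores lie between 0 and 1, and the 0.25 threshold is a widely quoted rule of thumb rather than a regulatory standard. A breach does not retrain or switch off anything automatically; it only flags the model for human review.

```python
import math
from typing import Sequence

# Hypothetical alert level: PSI > 0.25 is a common rule of thumb for a
# "significant" shift, not a regulatory requirement.
DRIFT_THRESHOLD = 0.25


def _bin_shares(scores: Sequence[float], bins: int, eps: float) -> list[float]:
    """Share of scores falling in each of `bins` equal-width bins on [0, 1]."""
    counts = [0] * bins
    for s in scores:
        # Clamp so that 1.0 (and any out-of-range value) lands in an edge bin.
        idx = min(max(int(s * bins), 0), bins - 1)
        counts[idx] += 1
    total = len(scores)
    # Floor empty bins at eps so the log term below stays finite.
    return [max(c / total, eps) for c in counts]


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI = sum over bins of (current% - baseline%) * ln(current% / baseline%)."""
    base = _bin_shares(baseline, bins, eps)
    cur = _bin_shares(current, bins, eps)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def needs_human_review(baseline: Sequence[float], current: Sequence[float]) -> bool:
    """Return True if drift warrants review; it flags, it never acts on its own."""
    return population_stability_index(baseline, current) > DRIFT_THRESHOLD


if __name__ == "__main__":
    validated = [i / 100 for i in range(100)]          # spread across the range
    this_month = [0.9 + i / 1000 for i in range(100)]  # bunched near the top
    print(needs_human_review(validated, validated))    # False
    print(needs_human_review(validated, this_month))   # True
```

The point of the design is the return type: the check produces a flag for a person, not an action, so the decision about what the shift means stays with the people accountable for the model.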

From Automation to Augmentation

The real transformation lies not in replacing professionals, but in redesigning how intelligence is applied inside compliance systems. AI should:

- Reduce false positives

- Prioritize cases intelligently

- Surface network-level insights

- Detect behavioral drift in real time

- Automate repetitive documentation

But decision authority, regulatory interpretation, and accountability must remain human. This is not a conservative stance. It is a strategic one.

When AI handles scale and behavioral complexity, professionals are freed to focus on materiality, emerging typologies, and systemic risk patterns. The outcome is not smaller compliance functions — it is smarter, more adaptive ones.

Forward-looking institutions are already moving toward this model. They are integrating fraud, AML, and transaction monitoring into unified intelligence layers. They are embedding human-in-the-loop review structures. They are designing explainable AI frameworks that regulators can audit with clarity.

The objective is not to remove human responsibility; it is to enhance it with structured intelligence.
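A human-in-the-loop review structure can be sketched in a few lines. In this hypothetical example (the `Alert` class, the disposition codes, and the helper functions are illustrative, not a real product API), the model's score only decides the order in which analysts see alerts. No alert leaves the queue until a named reviewer records a decision, and those decisions double as the labeled outcomes that retraining depends on and as the basis for tracking the false-positive rate.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical disposition codes; a real program would use its own taxonomy.
FALSE_POSITIVE = "false_positive"
VALID_DISPOSITIONS = {FALSE_POSITIVE, "escalated", "reported"}


@dataclass
class Alert:
    alert_id: str
    model_score: float                  # model output: used for ordering only
    disposition: Optional[str] = None   # set only by a human reviewer
    reviewer: Optional[str] = None      # who made the call (audit trail)


def review_queue(alerts: list[Alert]) -> list[Alert]:
    """Open alerts, highest model score first. Nothing is auto-closed."""
    open_alerts = [a for a in alerts if a.disposition is None]
    return sorted(open_alerts, key=lambda a: a.model_score, reverse=True)


def record_disposition(alert: Alert, disposition: str, reviewer: str) -> None:
    """The only code path that closes an alert, and it requires a named human."""
    if disposition not in VALID_DISPOSITIONS:
        raise ValueError(f"unknown disposition: {disposition!r}")
    if not reviewer:
        raise ValueError("a named reviewer is required")
    alert.disposition = disposition
    alert.reviewer = reviewer


def false_positive_rate(alerts: list[Alert]) -> float:
    """Share of human-closed alerts that turned out to pose no real risk."""
    closed = [a for a in alerts if a.disposition is not None]
    if not closed:
        return 0.0
    return sum(a.disposition == FALSE_POSITIVE for a in closed) / len(closed)


def training_labels(alerts: list[Alert]) -> list[tuple[str, int]]:
    """Human decisions become labels: 0 = false positive, 1 = confirmed risk."""
    return [
        (a.alert_id, 0 if a.disposition == FALSE_POSITIVE else 1)
        for a in alerts
        if a.disposition is not None
    ]


if __name__ == "__main__":
    alerts = [Alert("A1", 0.2), Alert("A2", 0.9), Alert("A3", 0.5)]
    print([a.alert_id for a in review_queue(alerts)])  # ['A2', 'A3', 'A1']
    record_disposition(alerts[1], "escalated", "analyst_1")
    record_disposition(alerts[0], FALSE_POSITIVE, "analyst_2")
    print(false_positive_rate(alerts))  # 0.5
    print(training_labels(alerts))      # [('A1', 0), ('A2', 1)]
```

The design choice worth noting is what the model is not allowed to do: it cannot set a disposition. Ranking and deciding live in separate code paths, which keeps the accountability boundary easy to audit.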

The Strategic Shift

The conversation around AI in compliance must mature. The choice is not between humans and machines. It is about designing systems where each does what it does best. AI excels at scale, speed, and behavioral pattern recognition. Humans excel at context, interpretation, and accountability. Confusing one for the other is risky.

The future of compliance will not be defined by headcount reduction. It will be defined by intelligence design — how institutions structure data, embed governance, and build feedback loops that allow systems to evolve without compromising responsibility.

Institutions that treat AI as a substitute may gain speed. Institutions that treat it as a partner will gain resilience. The future of compliance will not belong to the fastest systems. It will belong to the systems that learn — responsibly.