Brain-Inspired AI Needs New Rules As Current Checks Simply Don’t Apply

Researchers are increasingly focused on the challenges of governing NeuroAI and neuromorphic systems, where current regulatory approaches fall short. The paper argues that assurance and audit methods need to be reassessed and that regulation should co-evolve with brain-inspired computation, so that oversight is technically grounded in NeuroAI's distinctive physics, learning dynamics, and efficiency characteristics.

The Inadequacy of Current AI Governance Frameworks

Existing governance frameworks, designed for static artificial neural networks running on conventional hardware, are inadequate for the fundamentally different architectures of NeuroAI and neuromorphic systems. The study demonstrates that traditional regulatory benchmarks for accuracy, latency, and energy efficiency struggle to capture the adaptive, event-driven behavior of such systems.

Understanding NeuroAI and Neuromorphic Computing

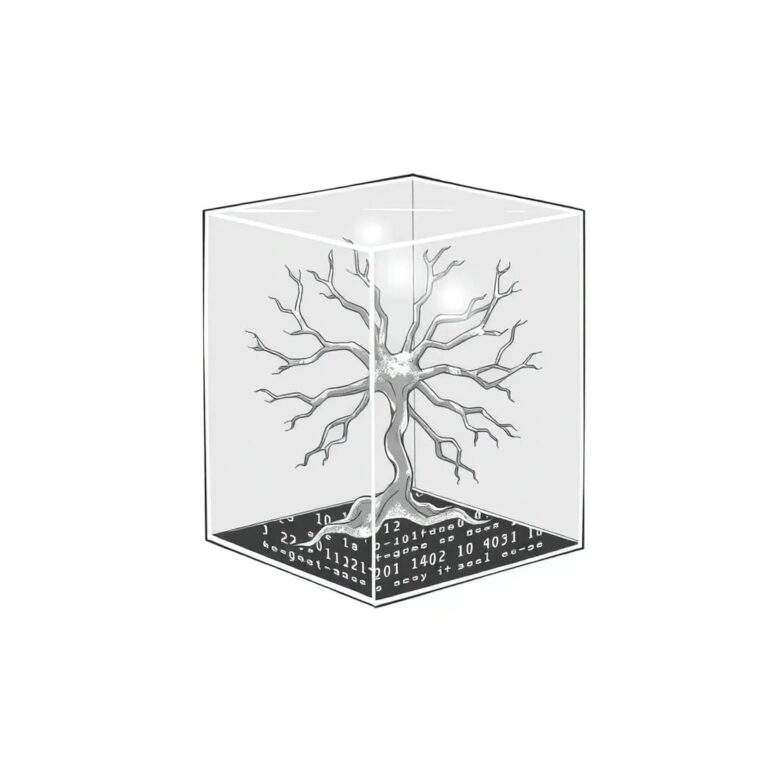

NeuroAI represents a convergence of neuroscience and artificial intelligence, aiming to create more capable and more efficient systems by leveraging insights from the brain. Neuromorphic computing departs from the conventional von Neumann architecture by co-locating memory and computation and by operating asynchronously, in an event-driven manner.

At the algorithmic level, this manifests in spiking neural networks, which communicate via discrete spikes encoding information in rate and timing, often employing local learning rules like spike-timing-dependent plasticity. This contrasts with artificial neural networks, which rely on continuous activations and global error backpropagation.
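To make the contrast with backpropagation concrete, here is a minimal sketch of a leaky integrate-and-fire neuron and a pair-based spike-timing-dependent plasticity (STDP) rule. It is not taken from the study: the function names, time constants, thresholds, and learning amplitudes are illustrative assumptions, and real neuromorphic platforms use their own neuron and plasticity models.

```python
import math


def simulate_lif(input_current, dt=1.0, tau_m=20.0,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Return spike times (ms) of a leaky integrate-and-fire neuron.

    All parameter values are illustrative assumptions, not measured ones.
    """
    v = v_rest
    spike_times = []
    for step, current in enumerate(input_current):
        # Euler step: the membrane leaks toward rest and is driven by input.
        v += dt / tau_m * (-(v - v_rest) + current)
        if v >= v_thresh:
            # The output is a discrete event, not a continuous activation.
            spike_times.append(step * dt)
            v = v_reset
    return spike_times


def stdp_delta_w(t_pre, t_post, a_plus=0.01, a_minus=0.012,
                 tau_plus=20.0, tau_minus=20.0):
    """Pair-based STDP weight change for one pre/post spike pair.

    The update uses only the two spike times local to the synapse;
    no global error signal is involved.
    """
    delta_t = t_post - t_pre
    if delta_t > 0:
        # Pre fired before post: potentiate, more strongly for short gaps.
        return a_plus * math.exp(-delta_t / tau_plus)
    if delta_t < 0:
        # Post fired before pre: depress.
        return -a_minus * math.exp(delta_t / tau_minus)
    return 0.0


if __name__ == "__main__":
    spikes = simulate_lif([1.5] * 200)
    print("spike times (ms):", spikes)
    print("weight change, pre 5 ms before post:", stdp_delta_w(10.0, 15.0))
    print("weight change, pre 5 ms after post:", stdp_delta_w(15.0, 10.0))
```

Because the weight change depends on relative spike timing and keeps happening during operation, a snapshot of the weights at certification time may not describe the deployed system, which is part of why static checks fit poorly.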

The Need for Adaptive Governance

This research highlights the need for governance to keep pace with advances in NeuroAI, so that safety and societal-impact considerations are built into the design of algorithms and hardware from the outset. Yet global efforts to regulate AI, including the EU AI Act and the U.S. NIST AI Risk Management Framework, are largely designed around static, high-compute, centrally trained models.

Proposed Frameworks and Evaluation Metrics

The study proposes a new approach, exemplified by benchmarking frameworks such as NeuroBench, that ties algorithmic performance to hardware efficiency and reframes evaluation from raw compute to a systems-level audit. It also explores how metrics of efficiency, adaptability, and embodiment can be translated into regulatory language, supporting a responsible transition of NeuroAI from laboratory prototypes to real-world applications.
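As a rough illustration of what a systems-level report could look like, the sketch below combines task accuracy with two event-driven workload measures, activation sparsity and a count of synaptic operations, plus a derived energy estimate. This is not the NeuroBench API; the function names, the spike-raster layout (one row of 0/1 values per neuron), and the per-operation energy figure are hypothetical placeholders.

```python
def activation_sparsity(raster):
    """Fraction of (neuron, timestep) entries in which no spike occurred."""
    total = sum(len(row) for row in raster)
    if total == 0:
        raise ValueError("raster must contain at least one entry")
    active = sum(1 for row in raster for s in row if s)
    return 1.0 - active / total


def synaptic_operations(raster, fan_out):
    """Event-driven op count: each spike triggers one op per outgoing synapse."""
    return sum(sum(row) * fan_out[i] for i, row in enumerate(raster))


def audit_report(raster, fan_out, accuracy, joules_per_synop):
    """Bundle algorithmic and hardware-facing numbers into one record.

    joules_per_synop is an assumed, hardware-specific constant that an
    auditor would need to obtain from measurement, not from this sketch.
    """
    synops = synaptic_operations(raster, fan_out)
    return {
        "accuracy": accuracy,
        "activation_sparsity": activation_sparsity(raster),
        "synaptic_ops": synops,
        "estimated_energy_j": synops * joules_per_synop,
    }


if __name__ == "__main__":
    # Two neurons observed over four timesteps (1 = spike).
    raster = [[0, 1, 0, 0],
              [1, 1, 0, 0]]
    report = audit_report(raster, fan_out=[10, 5], accuracy=0.9,
                          joules_per_synop=1e-12)
    for key, value in report.items():
        print(key, value)
```

The point of reporting these together is that energy use in an event-driven system depends on how often neurons actually fire on the workload, so an accuracy figure alone says little about real-world efficiency.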

Challenges in Auditing and Accountability

Auditability in NeuroAI necessitates dynamical-systems analysis, focusing on attractor landscapes, oscillatory coupling, spike synchrony, and stability margins, rather than static weight maps. The implications are particularly significant in high-stakes applications such as healthcare devices and autonomous vehicles, where safety and accountability are paramount.
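One of the quantities named above, spike synchrony, can be estimated directly from recorded spike times. The sketch below uses a simple stand-in measure, the mean pairwise Pearson correlation of binned spike counts; the study does not prescribe this particular measure, and the function names, bin width, and example spike times are assumptions chosen for illustration.

```python
import math
from itertools import combinations


def bin_spikes(spike_times, duration, bin_width):
    """Count spikes per time bin over the interval [0, duration)."""
    n_bins = int(math.ceil(duration / bin_width))
    counts = [0] * n_bins
    for t in spike_times:
        if 0 <= t < duration:
            # Clamp to guard against floating-point edge cases.
            counts[min(int(t // bin_width), n_bins - 1)] += 1
    return counts


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either series is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def synchrony_index(spike_trains, duration, bin_width):
    """Mean pairwise correlation of binned spike counts across neurons."""
    binned = [bin_spikes(train, duration, bin_width) for train in spike_trains]
    pairs = list(combinations(binned, 2))
    if not pairs:
        raise ValueError("need at least two spike trains")
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    in_phase = [[5, 25, 45], [5, 25, 45]]
    anti_phase = [[5, 25, 45], [15, 35, 55]]
    print("in-phase:", synchrony_index(in_phase, duration=60, bin_width=10))
    print("anti-phase:", synchrony_index(anti_phase, duration=60, bin_width=10))
```

A measure like this describes how the network behaves over time, which a static weight map cannot show. The result depends on the chosen bin width, so an audit procedure would need to fix and document that choice.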

Conclusion

As neuromorphic computing transitions from research labs to real-world applications, addressing governance gaps is becoming increasingly urgent. Future work must focus on developing assurance and audit methods that co-evolve with NeuroAI architectures, aligning regulatory metrics with the underlying physics and learning dynamics of brain-inspired computation.

For more information, consult the detailed study on regulating NeuroAI and neuromorphic systems.