UL Solutions Rolls Out a New Standard for AI Regulation

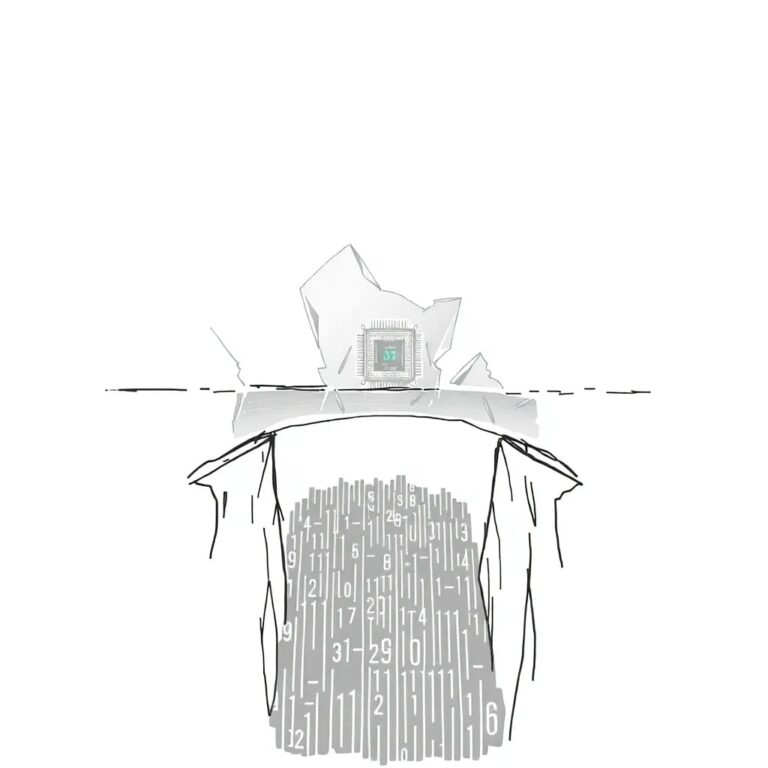

In a significant move for the tech industry, UL Solutions has introduced a new certification specifically for AI-embedded products. The initiative responds to AI technology evolving rapidly with minimal oversight, and it reflects the mantra that “Innovation without safety is failure.”

The Need for Regulation

For more than 120 years, UL has been a trusted name in product safety, certifying everything from tree lights to toaster cords. As AI technology advances, however, ensuring that AI-enabled products are safe has become increasingly critical. The absence of cohesive government regulation, together with the confusion created by a patchwork of emerging state laws, has produced a pressing need for standardized safety protocols.

Introducing UL 3115

The new standard, UL 3115, assesses whether an AI-enabled product is safe, robust, and well-governed, including whether a “human in control” approach is maintained throughout the product’s lifecycle. According to UL Solutions CEO Jennifer Scanlon, companies are seeking broader protections and assurances, a sign of growing demand for standards that build confidence in AI technologies.

Focus on Functional Safety

UL’s expertise lies in functional safety. Scanlon notes that just as drivers expect a car’s radio to work without compromising its brakes, AI-embedded products must undergo the same rigorous testing. When AI is embedded in children’s toys, for example, the question becomes: how do we ensure those toys are safe?

Certification Process

UL’s AI Center of Excellence has developed a systematic approach for applying safety protocols to AI products. The process begins with an outline of investigation, in which UL engineers and scientists work with customers to identify concerns and challenges. The evaluation covers the transparency of algorithms, potential biases, the veracity of training data, and mechanisms for human oversight.

First AI-Certified Products

To date, two products have received the AI certification: Qcells’ Energy Management System, an AI-enabled control engine for data centers, and the Omniconn Platform 4.0, a smart building solution. These certifications mark a first step in bridging the gap between rapid technological advancement and necessary safety measures.

Conclusion

As technology continues to evolve rapidly, standards like UL 3115 represent a proactive effort to ensure that safety keeps pace with innovation. In this fast-moving environment, the need for private-sector safety standards to provide guardrails is increasingly evident, opening a new chapter in the pursuit of safe technological progress.