Governing A.I. Across Borders: Why Provenance Demands Global Cooperation

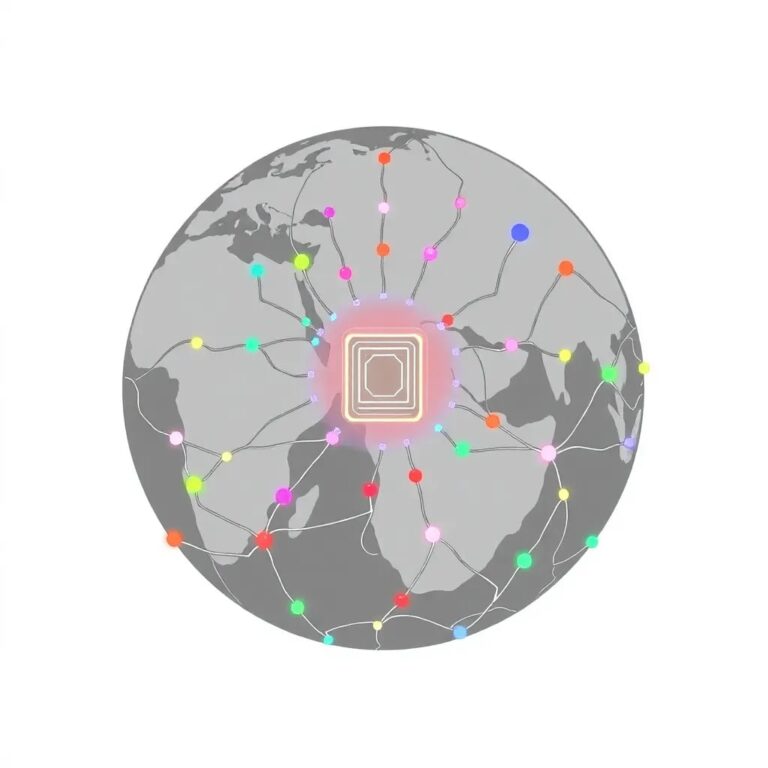

The question of A.I. provenance sits at the intersection of fundamental rights and emerging technology governance. Verifying the origins of both A.I. training data and generated content directly implicates constitutional protections for speech and the fight against misinformation. As synthetic content crosses national boundaries and erodes trust in democratic discourse, these challenges demand new frameworks that harmonize international standards while respecting state sovereignty.

The stakes involve not merely technical verification, but the preservation of human rights, the integrity of public law systems, and the future of digital constitutionalism in an age where algorithms increasingly mediate access to information and opportunity.

Urgent Need for International Governance Frameworks

Recent incidents highlight the need for coordinated action. When Marianna Vyshemirsky fled a bombed maternity hospital in Mariupol in 2022, Russian officials weaponized digital skepticism to dismiss photographs of her injuries as fabrications. Similarly, during the 2024 U.S. presidential campaign, A.I. systems were used to generate false images of immigrants supposedly engaging in harmful acts, leading to social unrest.

These situations reveal how synthetic content, and doubt about authentic content, crosses national boundaries, creating an urgent need for international governance frameworks. Recent research shows that consumers correctly identify A.I.-generated content only 50% of the time, roughly what guessing would achieve on a real-or-synthetic judgment. That uncertainty enables what scholars call the “liar’s dividend,” where bad actors dismiss genuine evidence by falsely claiming it was produced by A.I. systems.

Challenges of National Solutions

While national regulations like California’s A.I. Transparency Act and the European Union’s A.I. Act represent significant advancements, they also present critical challenges:

- Global Circulation: Synthetic content circulates globally, making national authentication ineffective when content crosses jurisdictional boundaries.

- Compliance Burdens: Fragmented regulations impose impossible compliance burdens on A.I. developers, particularly disadvantaging smaller companies and researchers from developing nations.

- Regulatory Arbitrage: Divergent national standards create opportunities for regulatory arbitrage, allowing the least restrictive environments to set global standards.

Leveraging Existing International Institutions

Effective international A.I. governance should leverage existing institutional frameworks rather than create new bureaucratic structures. The World Trade Organization (WTO) could harmonize technical standards, while the World Intellectual Property Organization (WIPO) could coordinate output provenance verification through a tiered certification system. UNESCO could address the cultural and educational dimensions often overlooked by national regulations.

Recent Progress and Remaining Gaps

Recent international summits have marked pivotal shifts toward actionable strategies. However, gaps remain, especially around input provenance: where training data comes from and whether it was collected ethically. The working conditions of data-labeling workers in the Global South illustrate these gaps, as many endure significant psychological harm while contributing to A.I. system development.

Moreover, existing standards inadequately address the systematic underrepresentation of minority groups in training datasets, leading to algorithmic discrimination across various sectors.

Implementation Mechanisms and Enforcement

Translating broad principles into concrete action requires sophisticated implementation mechanisms capable of adapting to diverse national contexts. A proposed framework could establish “regulatory coherence” zones, allowing nations with similar approaches to develop deeper cooperation while maintaining compatibility.

Enforcement could combine trade sanctions with technical measures, such as blocking non-compliant A.I. systems from accessing international networks. This dual approach provides both immediate and long-term enforcement options while allowing responses to remain proportionate.

Bridging the Development Gap

To avoid creating new forms of technological inequality, effective international governance must address the distinct challenges faced by developing nations. This includes leveraging the United Nations Technology Bank for A.I. infrastructure development and establishing a multi-stakeholder A.I. capacity development network through the UN system.

Looking Forward

The rapid evolution of A.I. technology demands governance frameworks that can adapt to continuous innovation while maintaining effective oversight. Recent developments emphasize both the urgency and complexity of these challenges.

Institutional mechanisms alone cannot ensure effective provenance verification. Success ultimately depends on sustained political will, adequate resources, and a genuine commitment to addressing power asymmetries between technology-producing and technology-consuming nations.

Effective governance frameworks will determine not only the future of A.I. technology but also our ability to distinguish truth from fiction in the digital age. Establishing robust provenance requirements for A.I. content is a crucial step in maintaining social trust across borders.