The California Legislature’s Push for More Privacy and AI Regulations

California’s 2025 legislative session concluded with a clear message to businesses: privacy compliance is expanding, and artificial intelligence (AI) governance is shifting from voluntary best practices to enforceable transparency and safety obligations. On the last day of 2025, lawmakers introduced 33 privacy and AI bills and sent 16 of them to Governor Gavin Newsom for signature or veto. Ultimately, the governor signed four privacy bills and seven AI bills into law and vetoed the other five. Below is a summary of the most consequential enacted measures, with practical compliance takeaways for organizations operating in California.

New Privacy Laws

The California Opt Me Out Act mandates browser-based universal opt-out signals, requiring companies developing or maintaining web browsers to provide consumers with a universal opt-out preference signal applicable across all websites. While this mandate targets browser developers, businesses must prepare for more machine-readable opt-out signals, ensuring that adtech, analytics, personalization, and consent tools recognize and honor these signals consistently.
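For engineering teams, honoring a machine-readable opt-out signal usually comes down to checking each request before any adtech or analytics tag loads. The sketch below assumes the signal arrives as the Global Privacy Control header (`Sec-GPC: 1`), which is one existing opt-out preference signal; the summary above does not say which signal format browsers will send. The function names and tag groups are hypothetical, and deciding which tags count as a sale or sharing of data remains a legal question.

```python
from typing import Mapping


def opt_out_requested(headers: Mapping[str, str]) -> bool:
    """Return True when the request carries a GPC-style opt-out signal.

    Assumption: the signal is the Global Privacy Control header, `Sec-GPC: 1`.
    """
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    return normalized.get("sec-gpc") == "1"


def allowed_tags(headers: Mapping[str, str]) -> list[str]:
    """Choose which (hypothetical) tag groups may load for this request."""
    tags = ["essential"]
    if not opt_out_requested(headers):
        # Only load tags that may sell or share data when no opt-out is present.
        tags += ["analytics", "advertising"]
    return tags
```

Running the check once in server-side middleware, rather than separately in each tag or consent tool, is one way to keep the signal honored consistently across vendors.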

AB 1043 introduces online age verification signals, shifting obligations for children’s online safety. Operating system providers must now offer an interface for users to input age-verification information during account setup. The law places liability on developers and excludes operating system providers and app stores from it. App developers should therefore treat OS-provided age signals as compliance-critical attributes.
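One way to treat an age signal as a compliance-critical attribute is to map it to feature settings in a single, testable place. The sketch below is illustrative only: the bracket names, the `FeaturePolicy` fields, and the choice to fall back to the most restrictive settings when no signal is present are all assumptions, not requirements taken from the statute or from any operating system API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class AgeBracket(Enum):
    # Hypothetical brackets; the real categories come from the statute and the OS.
    UNDER_13 = "under_13"
    AGE_13_TO_15 = "13_15"
    AGE_16_TO_17 = "16_17"
    ADULT = "18_plus"


@dataclass(frozen=True)
class FeaturePolicy:
    personalized_ads: bool
    direct_messages: bool


def policy_for(signal: Optional[AgeBracket]) -> FeaturePolicy:
    """Map an OS-provided age signal to feature settings."""
    # Design choice (an assumption): a missing signal gets the strictest policy.
    if signal is None or signal is AgeBracket.UNDER_13:
        return FeaturePolicy(personalized_ads=False, direct_messages=False)
    if signal in (AgeBracket.AGE_13_TO_15, AgeBracket.AGE_16_TO_17):
        return FeaturePolicy(personalized_ads=False, direct_messages=True)
    return FeaturePolicy(personalized_ads=True, direct_messages=True)
```

Keeping the mapping in one function makes it easier to document and audit how the signal changes product behavior.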

SB 361 expands data broker oversight through broader disclosures and tougher enforcement. Data brokers must now disclose whether they collect personal information across numerous categories, including sexual orientation, citizenship status, biometric information, and government identification numbers. They must also disclose to the California Privacy Protection Agency whether they sold or shared data with foreign actors, federal or state agencies (including law enforcement), or generative AI system developers. Consequently, data brokers and companies whose activities resemble data brokering should revisit their registration, reporting, and deletion workflows.

AB 45 extends prohibitions on the collection, use, disclosure, sale, sharing, or retention of personal information of individuals located at or within the precise geolocation of clinics or reproductive health care service centers. The law provides a private right of action for violations, so organizations should inventory their location collection and retention practices, geofenced advertising logic, and third-party tracker behavior.
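A location inventory often leads to a concrete control: dropping location events that fall near a sensitive site before they are stored or shared. The sketch below uses the haversine great-circle distance to filter events. The `Point` type, the function names, and the radius passed in are hypothetical; the legally relevant radius and the list of covered sites should come from counsel, not from this example.

```python
import math
from typing import Iterable, NamedTuple


class Point(NamedTuple):
    lat: float  # degrees
    lon: float  # degrees


EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


def haversine_m(a: Point, b: Point) -> float:
    """Great-circle distance between two points, in meters."""
    lat1, lat2 = math.radians(a.lat), math.radians(b.lat)
    dlat = lat2 - lat1
    dlon = math.radians(b.lon - a.lon)
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    # Clamp to 1.0 to guard against floating-point overshoot before asin.
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(min(1.0, h)))


def drop_sensitive(
    events: Iterable[Point], sensitive_sites: Iterable[Point], radius_m: float
) -> list[Point]:
    """Keep only events farther than radius_m from every sensitive site."""
    sites = list(sensitive_sites)
    return [e for e in events if all(haversine_m(e, s) > radius_m for s in sites)]
```

Applying the filter at ingestion, before data reaches analytics or advertising systems, avoids having to locate and delete the same records later.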

AI Transparency and Safety

The Transparency in Frontier Artificial Intelligence Act requires certain frontier AI developers, with revenues of at least $500 million, to disclose safety efforts, including:

- Disclosures to the Office of Emergency Services along with mandatory third-party audits.

- Identification of the accepted standards integrated into their frameworks.

- Development and publication of a clear safety framework on the developer’s website.

Developers within this scope should prepare to be audit-ready by establishing written safety frameworks, mapped standards, and disclosure processes. Enterprises procuring frontier model services should anticipate changes in procurement and due diligence as vendors align with audit and disclosure expectations.

Additionally, SB 243 regulates companion chatbots, systems designed to engage users with human-like responses, requiring:

- Clear disclosure to users that they are interacting with an AI, not a human, if a reasonable person could be misled.

- Safeguards to prevent engagement with harmful content, including outputs related to self-harm and suicidal ideation, particularly if the user is a known minor.
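The two chatbot requirements above can be pictured as a thin wrapper around the model’s reply. The sketch below is a deliberately naive illustration: the disclosure wording, the placeholder safe response, and the keyword list are all assumptions, and plain keyword matching is not an adequate safeguard on its own. A production system would rely on dedicated classifiers and reviewed escalation paths.

```python
from dataclasses import dataclass

# Placeholder wording (an assumption); real text should be reviewed by counsel.
AI_DISCLOSURE = "You are chatting with an AI system, not a human."
SAFE_RESPONSE = "I can't continue with this topic. Please reach out to a crisis service."

# Hypothetical, incomplete term list used only to illustrate the control flow.
RISK_TERMS = ("suicide", "self-harm", "kill myself")


@dataclass
class Session:
    disclosed: bool = False  # whether the AI disclosure has been shown yet


def guard_reply(session: Session, user_message: str, model_reply: str) -> str:
    """Apply the disclosure and a basic content safeguard to one chat turn."""
    text = f"{user_message} {model_reply}".lower()
    # Replace the model output when either side of the turn touches a risk term.
    reply = SAFE_RESPONSE if any(term in text for term in RISK_TERMS) else model_reply
    if not session.disclosed:
        # Show the disclosure once, at the start of the conversation.
        session.disclosed = True
        reply = f"{AI_DISCLOSURE}\n\n{reply}"
    return reply
```

Routing every turn through one function also produces a single point where disclosures and interventions can be logged for later review.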

What to Expect in 2026

The second half of the California legislative session has begun with more than 22 bills carried over and a February 20, 2026, deadline for new bills. Privacy bills currently in committee include modifications to the California Consumer Privacy Act (CCPA) and the California Invasion of Privacy Act (CIPA), along with workplace surveillance bills. New obligations for data brokers and new reporting requirements for businesses that collect precise geolocation data are also anticipated.

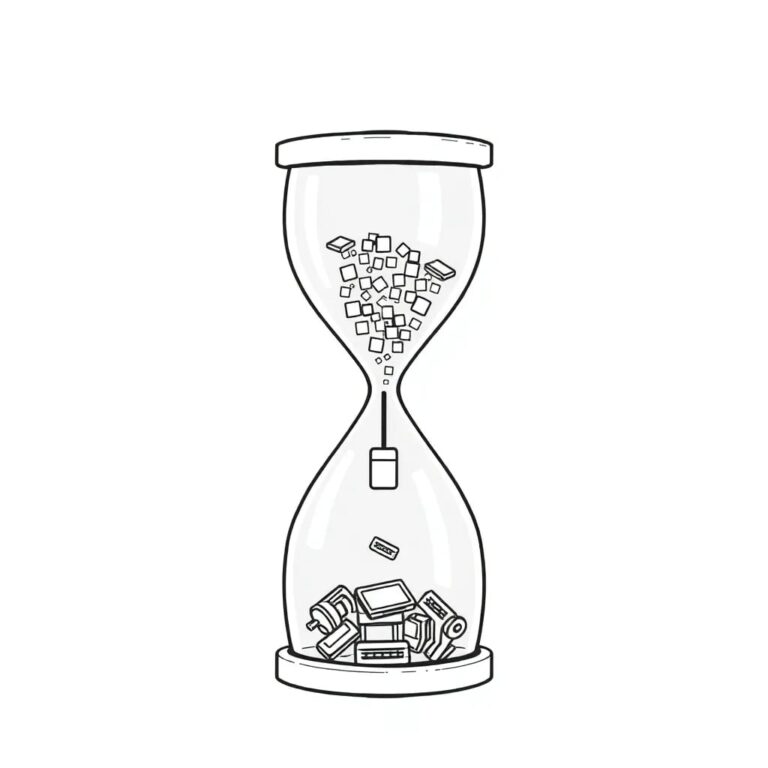

Ten AI bills await further action, addressing issues such as algorithmic pricing, AI bots, high-risk automated decision-making, copyright, and discrimination. Collectively, these enactments indicate that California is steadily transforming privacy and AI risk management into operational requirements that regulators and plaintiffs can enforce through documentation, disclosures, and demonstrable controls.

Organizations must align engineering, product, procurement, and legal teams around a comprehensive compliance roadmap. That roadmap should cover universal opt-out signal recognition, age and minor-related safeguards, data broker-style reporting and deletion workflows, sensitive geolocation and health data protections, and AI transparency and safety governance. By institutionalizing repeatable processes now (data mapping, vendor oversight, policy updates, testing and monitoring, and incident response playbooks), organizations can absorb new California requirements as incremental changes rather than disruptive rework.