Anthropic’s Conflict with the Pentagon Amid Iran War Raises Ethical Concerns in AI Warfare

(OSV News) — The recent tragic incident involving the Shajarah-Tayyebeh elementary school in Minab, Iran, where 175 bodies were recovered following a U.S. Tomahawk cruise missile strike, has ignited discussions about the ethical implications of AI warfare. Preliminary reports suggest that outdated human intelligence led to the misidentification of the school as a military target during the early hours of the U.S. and Israel’s conflict with Iran.

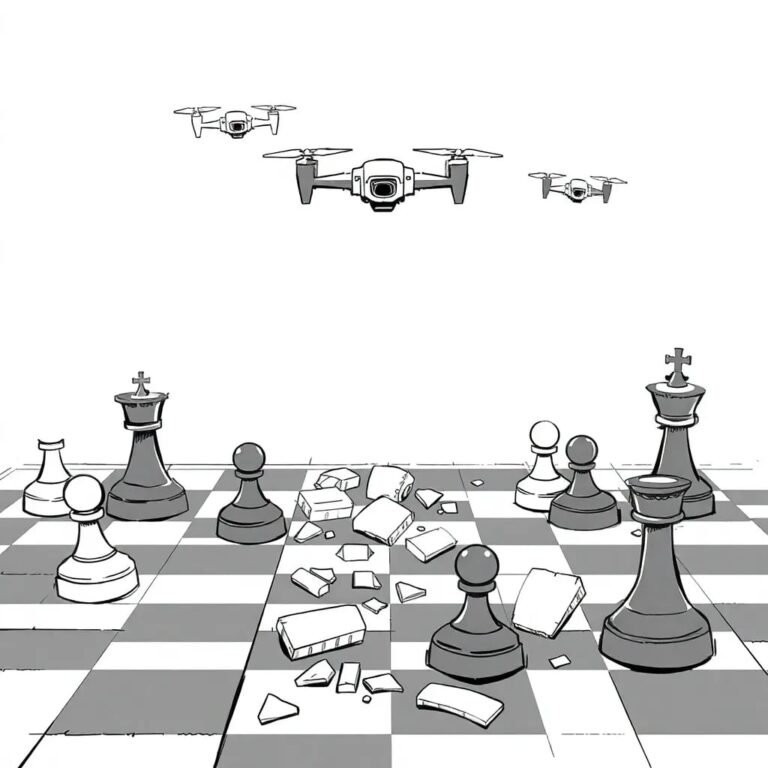

This situation has drawn attention to the complex relationship between generative artificial intelligence and military operations, particularly the use of such systems to process data in order to identify and rank potential military targets for human review.

The Emergence of the ‘First AI War’

Commentators have dubbed the ongoing conflict among the United States, Israel, and Iran “the first AI war.” The conflict raises numerous ethical considerations, primarily centered on the belief that AI should not operate autonomously.

On February 27, just before Operation Epic Fury commenced, President Donald Trump instructed government agencies to cease collaboration with tech company Anthropic due to differing views on the acceptable applications of its technology within the Department of Defense. In response, Anthropic filed a lawsuit against the Pentagon on March 9.

Concerns Raised by Anthropic’s CEO

Anthropic’s CEO, Dario Amodei, expressed concerns over the use of AI for mass domestic surveillance and fully autonomous weapons. He stated, “In a narrow set of cases, we believe AI can undermine, rather than defend, democratic values. Some uses are also simply outside the bounds of what today’s technology can safely and reliably do.”

Support from Catholic Moral Theologians

On March 13, a group of 14 Catholic moral theologians submitted a friend-of-the-court brief in support of Anthropic. They highlighted that the company’s objections to AI’s use in mass surveillance align with Catholic teachings on privacy and subsidiarity. The theologians argued that lethal autonomous weapons obscure human agency, shifting responsibility from humans to machines.

Anthony Granado, an associate general secretary at the U.S. Conference of Catholic Bishops, noted that Pope Leo XIV has described the implications of AI technology as a “digital revolution.” He emphasized that AI’s impact spans a wide range of issues vital for human life and dignity.

The Non-Neutrality of AI

Granado further elaborated that “AI is neither neutral in its implementation nor by its design.” He asserted that decisions regarding warfare must adhere to moral law and ethical considerations. The bishops have expressed deep concern over the development and use of autonomous weapons, stressing that human decision-making remains essential to mitigating the horrors of warfare and the erosion of fundamental human rights.

Military Use of Advanced AI Tools

U.S. Navy Adm. Brad Cooper, head of U.S. Central Command, indicated that advanced AI tools are being utilized to conduct military strikes. “These systems help us sift through vast amounts of data in seconds, enabling our leaders to make smarter decisions faster than the enemy can react,” he stated. However, he affirmed that humans will always make final decisions regarding military actions.

Concerns from Democratic Lawmakers

On March 12, 120 Democratic lawmakers expressed concerns regarding reports that U.S. and Israeli strikes have hit schools, hospitals, and other civilian areas in Iran. They questioned the role of AI in target selection, intelligence assessment, and legal determinations, especially concerning the tragic strike on February 28.

The Need for Ethical Oversight

Historically, the ethics of warfare have been governed by strict rules of engagement overseen by the Department of Defense. John Slattery, the executive director of the Carl G. Grefenstette Center for Ethics in Science, Technology, and Law, emphasized that these frameworks must also apply to technological systems.

However, there are growing concerns regarding the administration’s approach to warfare. Defense Secretary Pete Hegseth and Trump have expressed a desire to remove restrictions on AI, raising troubling questions about the moral implications of such actions.

Corporate Responsibility and Ethical Technology

In light of these developments, some business leaders are questioning the implications of government interference in corporate autonomy. Anthony Cannizzaro, a professor at The Catholic University of America, noted that while the state can influence corporate actions, removing moral checks from technology is problematic.

He referenced the Catholic principle of subsidiarity, advocating that larger institutions should not overpower smaller entities. “Anthropic has a prerogative to build its own values into that product,” he stated, emphasizing that the government should not dictate these values.

The Church’s Stance on Warfare Ethics

Msgr. Stuart Swetland, a canon lawyer and former U.S. Navy officer, affirmed that corporations like Anthropic have responsibilities to ensure their products are used ethically. He reiterated the importance of human involvement in warfare decision-making, pointing out that the criteria for just war must always be upheld.

He concluded, “Dehumanizing war will make it too easy to use deadly force, leading to disproportionate consequences that must be vigilantly guarded against.”