Why AI Companies Need Mutual Regulation

Competition among frontier AI labs creates a race to the bottom on safety. A company that invests more in safety deploys models later, loses customers, and risks losing the investors it needs to pay for compute. This dynamic pressures labs to cut safety measures, as seen with Anthropic’s abandonment of its safety guarantee and OpenAI’s reduction of pre-deployment testing.

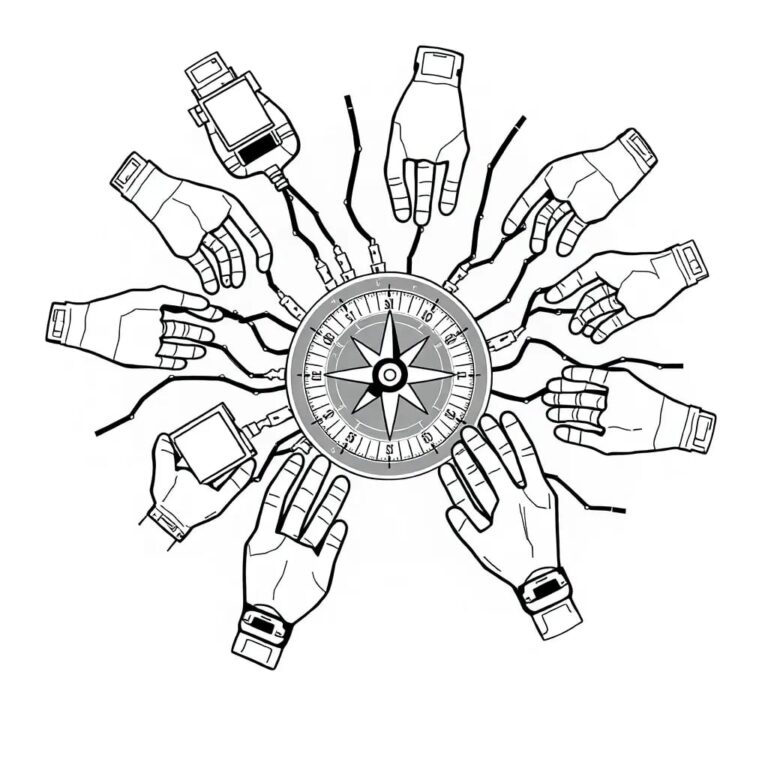

The Collective Action Problem

Four core challenges hinder effective AI regulation:

1. Competitive Pressure

Labs sacrifice safety to stay ahead, leading to a collective action problem where no single firm can unilaterally improve safety without risking market share.

2. Information Asymmetry

Critical details about training data, reinforcement learning techniques, and safety evaluations are proprietary, creating a gap between regulators and the industry.

3. The Pacing Problem

AI technology evolves faster than law. Rules designed for GPT-3 become obsolete for GPT-4, so any workable regime needs rules that can be updated about as quickly as the technology changes.

4. Irreversible Harm

Catastrophic risks (e.g., CBRN threats, advanced cyber-attacks) require ex-ante intervention because liability imposed after the fact is often insufficient when the harm cannot be undone.

Self-Regulatory Organizations (SROs) as a Solution

Financial markets have long used federally supervised Self-Regulatory Organizations (SROs) like FINRA. These bodies combine industry expertise with government oversight, offering a model that can be adapted for AI.

Key Features of an AI SRO

- Mandatory Membership: All AI labs meeting defined thresholds (training compute, revenue, R&D spend) must join.

- Statutory Authority: Empowered by legislation, the SRO can write binding rules, subject to approval by a supervising agency, much as the SEC approves FINRA rules.

- Funding: Fees paid by member labs fund the SRO, avoiding reliance on congressional appropriations.

- Governance: A balanced board of independent safety experts and industry representatives, overseen by a supervising agency.
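The mandatory-membership test could be sketched roughly as follows. This is a minimal illustration, not a proposed rule: the threshold values, the `Lab` type, and the choice that crossing any single threshold triggers membership are all assumptions, since the text names the criteria but not their values or how they combine.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
# The article names the criteria but does not specify values.
COMPUTE_FLOP_THRESHOLD = 1e26
REVENUE_USD_THRESHOLD = 1e9
RND_USD_THRESHOLD = 5e8


@dataclass(frozen=True)
class Lab:
    """Hypothetical record of the facts a membership test would need."""
    name: str
    training_compute_flop: float
    annual_revenue_usd: float
    annual_rnd_usd: float


def must_join(lab: Lab) -> bool:
    """Assumption: crossing ANY one threshold makes membership mandatory."""
    return (
        lab.training_compute_flop >= COMPUTE_FLOP_THRESHOLD
        or lab.annual_revenue_usd >= REVENUE_USD_THRESHOLD
        or lab.annual_rnd_usd >= RND_USD_THRESHOLD
    )
```

Using "any" rather than "all" makes the test harder to avoid by staying just under one criterion, but a statute could just as well choose a different combination.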

How an AI SRO Would Operate

Rulemaking Process

Minor updates (e.g., benchmark adjustments) could take effect immediately, while major revisions undergo a public comment period, mirroring the dual-track system in finance.
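The two tracks can be sketched as a simple routing decision. This is an illustrative sketch only: the `RuleChange` type, the `is_major` flag, and the 30-day comment period are assumptions, and who decides whether a change counts as major is left open here.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Track(Enum):
    IMMEDIATE = "immediate"
    NOTICE_AND_COMMENT = "notice_and_comment"


# Hypothetical comment-period length; the article does not specify one.
COMMENT_PERIOD_DAYS = 30


@dataclass(frozen=True)
class RuleChange:
    title: str
    is_major: bool  # assumption: classified before filing
    filed_on: date


def route(change: RuleChange) -> Track:
    """Minor updates take effect at once; major revisions get public comment."""
    return Track.NOTICE_AND_COMMENT if change.is_major else Track.IMMEDIATE


def earliest_effective_date(change: RuleChange) -> date:
    """Earliest date the change could bind members under this sketch."""
    if route(change) is Track.IMMEDIATE:
        return change.filed_on
    return change.filed_on + timedelta(days=COMMENT_PERIOD_DAYS)
```

For example, a benchmark adjustment filed on a given day would bind members that same day, while a major revision filed alongside it could not take effect until the comment period closes.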

Enforcement

The SRO would have powers to issue fines, suspend models, or bar firms from operating, with decisions appealable to the supervising agency and, ultimately, the courts.

Mitigating Capture

Robust independent board representation, whistleblower protections, and agency oversight are essential to prevent industry capture, a lesson drawn from past FINRA scandals.

Illustrative Example

Suppose a lab’s model exceeds a bio-risk threshold on a Frontier Model Forum (FMF) benchmark. Under SRO rules, the lab must submit additional safety tests. Failure to comply triggers suspension of the model and possible fines. The example shows how the SRO could enforce safety with targeted, escalating measures instead of blanket restrictions on innovation.
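The escalation in this example might be modeled as a small decision function. This is a sketch under stated assumptions: the threshold value, the `ModelStatus` fields, and the notion of a submission deadline are hypothetical, and the review of submitted tests is outside its scope.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "no action"
    REQUIRE_TESTS = "require additional safety tests"
    SUSPEND = "suspend model (fines possible)"


# Hypothetical threshold on a hypothetical 0-to-1 benchmark score.
BIO_RISK_THRESHOLD = 0.5


@dataclass(frozen=True)
class ModelStatus:
    bio_risk_score: float
    extra_tests_submitted: bool
    deadline_passed: bool  # assumption: the SRO sets a submission deadline


def next_action(status: ModelStatus) -> Action:
    """Return the SRO's next step for a model under this sketch."""
    if status.bio_risk_score <= BIO_RISK_THRESHOLD:
        return Action.NONE  # below threshold: nothing is triggered
    if status.extra_tests_submitted:
        return Action.NONE  # lab complied; review is not modeled here
    if status.deadline_passed:
        return Action.SUSPEND  # failure to comply
    return Action.REQUIRE_TESTS
```

Suspension is reached only after the lab has had a chance to submit the additional tests, which matches the order of steps in the example.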

Comparison with Other Proposals

Alternative frameworks, such as regulatory markets, private governance bodies, or California’s SB-813, share SRO-like elements but often lack mandatory participation, statutory backing, or real-time rulemaking, limiting their effectiveness.

Conclusion

Adapting the proven SRO model from finance offers a pragmatic path to address the four fundamental challenges of AI regulation: competitive pressure, information asymmetry, the pacing problem, and irreversible harm. By establishing a supervised, mandatory, and well-funded AI SRO, policymakers can create a flexible yet accountable system that encourages safety investment while preserving the pace of innovation.