America’s AI Regulatory Patchwork: A Barrier to Innovation

The patchwork of artificial intelligence (AI) regulation in the United States imposes compliance costs that can cripple startups, hindering innovation and favoring established companies. The case of the autonomous driving startup PerceptIn exemplifies this issue. PerceptIn initially budgeted $10,000 for regulatory compliance but faced actual costs exceeding $344,000 per deployment project, more than 34 times its estimate, which ultimately led to its closure.

The Burden of Compliance

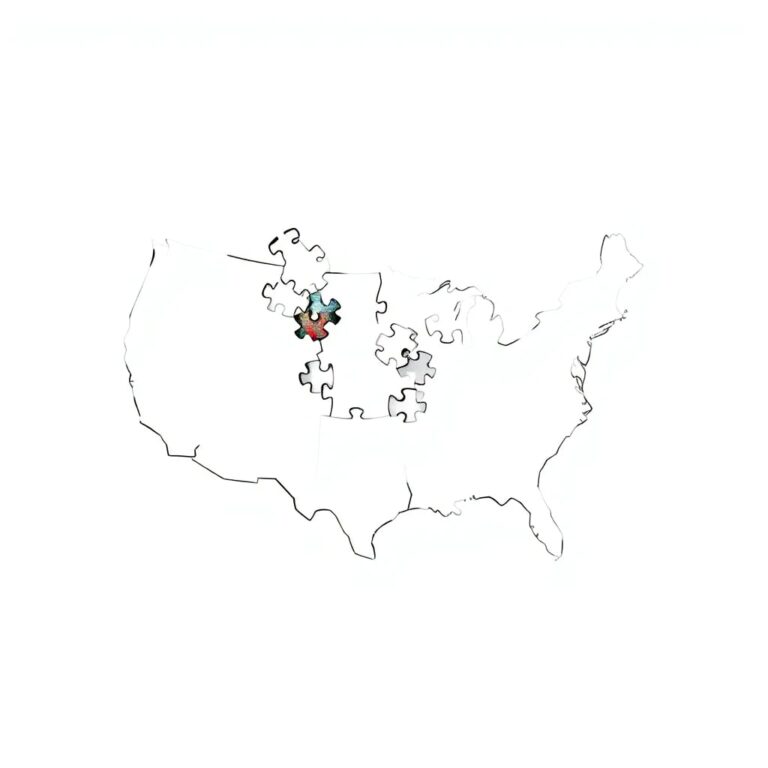

In the previous year, over 1,200 AI-related bills were introduced across various states, with at least 145 becoming law. This regulatory fragmentation results in a chaotic environment where each jurisdiction defines critical terms such as “artificial intelligence”, “high-risk systems”, and “consequential decisions” differently. Consequently, companies are forced to navigate incompatible frameworks, incurring significant compliance burdens that can add approximately 17 percent to AI system expenses.

For small businesses, California’s privacy and cybersecurity regulations alone impose nearly $16,000 in annual compliance costs. However, this figure often understates the actual burden, because these costs weigh more heavily on a company the smaller its revenue base.

The Compliance Trap

Research from the Harvard Kennedy School identified a “compliance trap”, where regulatory costs outpace startups’ ability to generate revenue. A 200 percent increase in fixed compliance costs can shift a startup’s operating margin from positive 13 percent to negative 7 percent, significantly impacting survival prospects. A small team developing an employment screening tool faces the same baseline compliance obligations as a larger enterprise, but without the revenue base to absorb these costs.
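The arithmetic behind that margin shift can be reconstructed with a rough sketch. It assumes, purely for illustration, that revenue and all non-compliance costs stay constant while compliance costs triple; the revenue figure and function names below are hypothetical and do not come from the Harvard Kennedy School research. Under those assumptions, a 20-point drop in margin from a 200 percent cost increase implies that compliance started at roughly 10 percent of revenue.

```python
def operating_margin(revenue, other_costs, compliance_costs):
    """Operating margin as a fraction of revenue."""
    return (revenue - other_costs - compliance_costs) / revenue


def implied_compliance_share(margin_before, margin_after, pct_increase):
    """Baseline compliance cost as a share of revenue.

    Assumes revenue and every other cost are unchanged, so the whole
    margin drop comes from the increase in compliance costs.
    """
    return (margin_before - margin_after) / (pct_increase / 100)


# Figures quoted in the text: +13% margin, -7% margin, 200% cost increase.
share = implied_compliance_share(0.13, -0.07, 200)

# Hypothetical startup with $1M in revenue (illustrative assumption only).
revenue = 1_000_000
compliance = share * revenue
other_costs = revenue * (1 - 0.13) - compliance

before = operating_margin(revenue, other_costs, compliance)
after = operating_margin(revenue, other_costs, compliance * 3)  # +200% means 3x

print(f"Implied baseline compliance share: {share:.0%}")
print(f"Margin before: {before:.0%}, margin after: {after:.0%}")
```

The same fixed increase applied to a company with ten times the revenue would move its margin by only about two points, which is the asymmetry the compliance trap describes.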

Competitive Disadvantages

This regulatory dynamic hands a substantial advantage to established tech giants, which can maintain compliance departments larger than entire startups. These incumbents can afford multi-jurisdictional legal teams and have the political connections necessary to influence emerging regulations. Startups, by contrast, often find the same compliance burdens insurmountable, and the resulting barriers to entry stifle innovation.

A Call for Federal Action

The implications of this regulatory chaos are alarming. As American entrepreneurs dedicate resources to navigating contradictory compliance regimes, Chinese AI companies benefit from a unified regulatory framework. Although China’s approach is not without challenges, it provides coherence that the U.S. patchwork lacks. When compliance costs exceed development budgets, innovation either halts or relocates to jurisdictions with clearer regulations.

In response to these competitive threats, the White House issued an executive order in December 2025, criticizing the “patchwork of 50 different regulatory regimes” and directing the U.S. Department of Justice to establish an AI Litigation Task Force. While this represents a necessary first step, legislative action is essential to create a cohesive national framework.

Proposed Solutions

Federal preemption legislation could establish uniform national standards for AI systems while allowing states to enforce general consumer protection laws. Such frameworks already exist in industries like aviation safety and pharmaceuticals, demonstrating that coherence is achievable. Safe harbor provisions could protect companies implementing reasonable bias testing and impact assessments from liability, incentivizing responsible development without conflicting mandates.

Conclusion

The current trajectory of regulatory chaos is unsustainable. Each passing month without a cohesive framework represents a loss of American innovation to better-organized competitors. Originally designed to limit Big Tech’s power, state regulations have inadvertently fortified incumbents while suffocating startups. This compliance trap does not protect consumers; it protects monopolies and enhances the competitive edge of foreign adversaries. The time for reform is now.