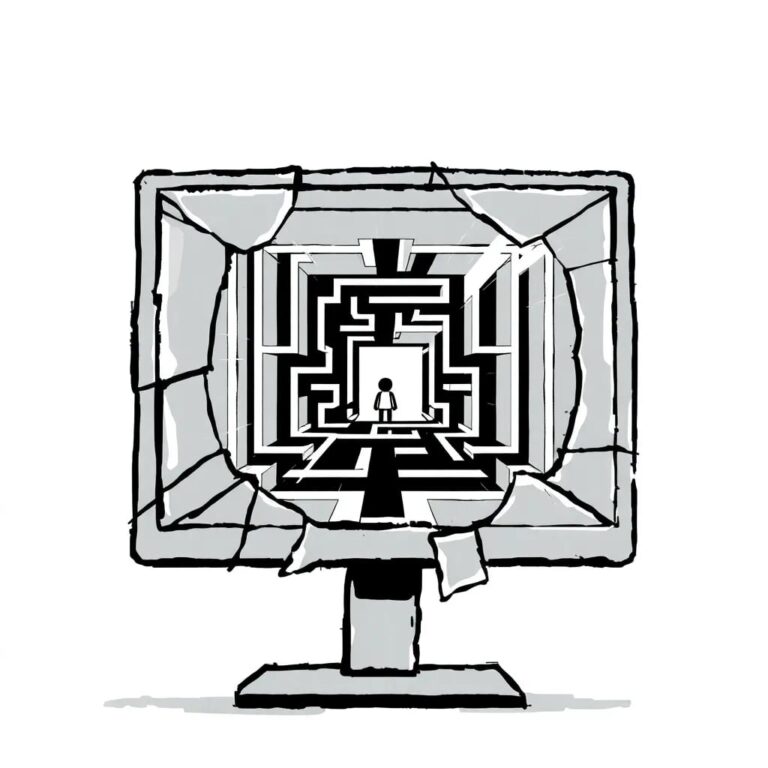

“The Internet Is Becoming a Prison for Will and Privacy”

Introduction

In recent discussions surrounding artificial intelligence (AI) regulation, a critical perspective has emerged regarding the impact of these regulations on fundamental rights and technological governance in the European context. As the European Union (EU) strives to establish itself as a leader in AI regulation through initiatives like the AI Act, significant debates have arisen concerning the implications of such comprehensive legislation.

The Ambition of European AI Regulation

The EU has made ambitious strides in AI regulation, driven by a genuine concern for fundamental rights. However, this ambition has created challenges, particularly for entrepreneurship. The AI Act’s risk-based classification of AI systems (unacceptable, high, limited, and minimal risk) has introduced confusion and legal uncertainty, especially for small companies eager to innovate.

Defining Artificial Intelligence

Understanding what constitutes artificial intelligence is crucial for effective regulation. AI technology enables machines to perform tasks that traditionally required human reasoning. Although it lacks consciousness, AI learns from data, recognizes patterns, and makes decisions. For instance, generative AI adapts its responses based on user interactions, illustrating its operational capabilities.

Challenges in Regulation

One of the fundamental challenges in regulating AI is the rapid pace of technological advancement. The law often lags behind innovation, making it difficult to legislate effectively. Furthermore, the prospect of artificial superintelligence raises legal questions that remain unanswered. Regulatory efforts should focus on preventing clear abuses, such as the generation of harmful content.

Europe’s Position in the Technological Landscape

While Europe’s regulatory efforts may represent a moral and ethical advancement, they leave Europe at a geopolitical disadvantage compared to the United States and China. The AI Act prohibits certain forms of mass surveillance, yet initiatives like Chat Control pull in the opposite direction, creating inconsistencies.

The Role of Regulation in Citizen Protection

Although regulations are designed to protect citizens, implementation remains incomplete, and European regulators lack consensus. Citizens often remain unaware of the risks associated with algorithmic decision-making, which leaves them exposed to algorithmic polarization and manipulation.

Algorithmic Authority and Control

The concept of algocracy, or rule by algorithm, raises questions about who oversees the algorithms that increasingly shape societal behavior. The assumption that AI-generated information is inherently true is a dangerous fallacy, as models frequently make mistakes and reflect biases. This highlights the importance of critical thinking and of reducing dependence on social media.

Concerns About Chat Control

Chat Control poses a significant risk, introducing surveillance that contradicts the principles the AI Act aims to uphold. Citizens find themselves caught between protective rhetoric and actual control measures, raising concerns over privacy and autonomy.

The Path Forward for Europe

Despite the challenges, there is potential for Europe to regain its footing in the AI race. Supporting small and medium-sized enterprises is crucial, as they form the backbone of Europe’s economy. The current regulations disproportionately burden small entrepreneurs, who cannot absorb fines as larger companies can.

Conclusion

Looking ahead, there is hope that Europe will reassess its regulatory approach. Protecting rights should coexist with fostering competition. If regulatory excesses persist, Europe risks losing further ground in the global technological landscape. The current regulatory framework has more flaws than merits, and timely action is essential.