AI’s Dual Role in Cybersecurity: Insights and Regulatory Considerations

As the landscape of cybersecurity continues to evolve, the dual role of Artificial Intelligence (AI) in both enhancing security measures and presenting new threats has become increasingly significant. A recent discussion highlights how AI is reshaping the cybersecurity framework, especially in sectors such as online gambling.

The Evolving Threat Landscape

With the surge in online businesses, industries like gambling face constant threats from cyber adversaries. The risks are comparable to those in other sectors, including retail and banking, and the scale of the threat grows as companies expand their global reach. The more popular a platform becomes, the larger the target it presents to potential attackers.

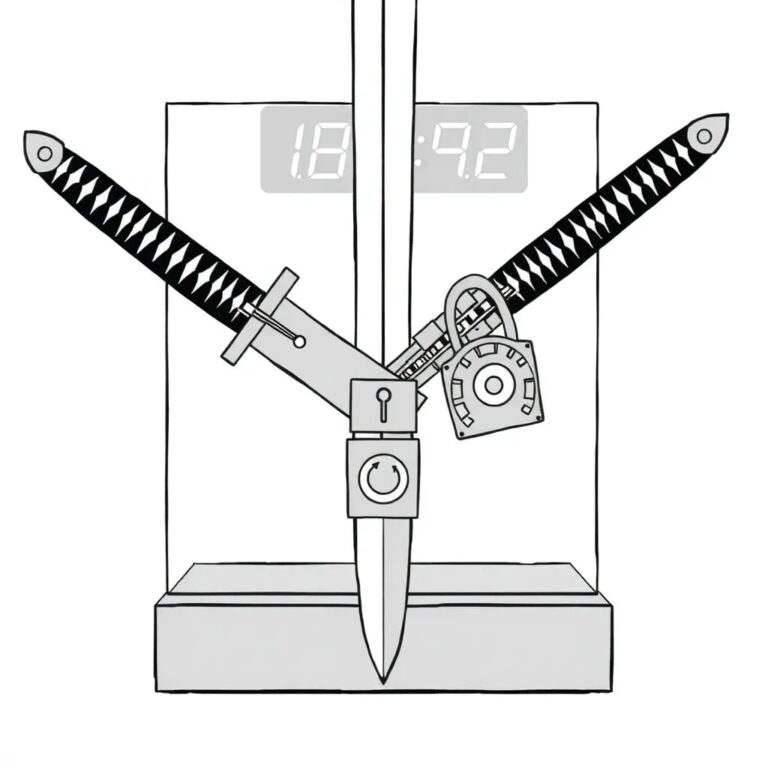

AI: A Double-Edged Sword

The rise of generative AI has altered the cybersecurity landscape significantly. Although AI and machine learning have been used for years, generative AI lowers the barrier to entry for malicious activity. It allows individuals without advanced technical skills to create sophisticated threats, making attacks on existing vulnerabilities more frequent and more advanced.

Emergence of Vibe Coding

One notable trend is vibe coding, in which non-technical users generate functional code from simple natural-language prompts. This shift has profound implications for security: it enables the development of malicious software, including ransomware, by individuals who previously lacked the necessary expertise.

AI in Cybersecurity Tools

Despite the challenges, AI also brings clear efficiencies to cybersecurity. For instance, Security Information and Event Management (SIEM) technologies benefit from AI’s ability to analyze vast volumes of security logs rapidly. This capability allows cybersecurity teams to identify relevant signals and patterns much more quickly than manual analysis would permit.
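To make the idea of pulling signals out of log volume concrete, here is a minimal Python sketch. It is not how any particular SIEM product works and it uses a simple robust statistic rather than a trained model. The event format, the function name `flag_noisy_sources`, and the threshold are all assumptions made for illustration.

```python
from collections import Counter
from statistics import median


def flag_noisy_sources(events, threshold=5.0):
    """Return source IPs whose failed-login count is far above the typical count.

    `events` is an iterable of (source_ip, outcome) pairs, a hypothetical
    simplification of parsed authentication logs.
    """
    failures = Counter(ip for ip, outcome in events if outcome == "fail")
    if not failures:
        return []

    counts = list(failures.values())
    med = median(counts)
    # Median absolute deviation: a spread estimate that a single extreme
    # source cannot inflate. Fall back to 1.0 if all counts are identical.
    mad = median(abs(c - med) for c in counts) or 1.0

    return sorted(ip for ip, c in failures.items() if (c - med) / mad > threshold)


# Hypothetical sample: three ordinary sources and one that fails 40 times.
sample = (
    [("10.0.0.1", "fail")] * 2
    + [("10.0.0.2", "fail")] * 3
    + [("10.0.0.3", "fail")] * 2
    + [("10.0.0.9", "fail")] * 40
    + [("10.0.0.1", "ok")]
)
print(flag_noisy_sources(sample))  # → ['10.0.0.9']
```

A production tool would weigh many more features (time of day, geography, account history), but the principle is the same: summarize a large log stream automatically and surface only the outliers for human review.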

Implementing AI Securely

The integration of AI tools into business operations requires a thorough understanding of the associated risk profile. Organizations must ensure that AI implementations do not compromise security while still meeting business objectives. Successful implementation hinges on strong communication and collaboration between cybersecurity teams and business units, so that security is maintained without hindering innovation.

Regulatory Perspectives

The current discourse around AI regulation raises a critical question: should the focus be on regulating the development of AI or its usage? One argument holds that the development of AI should remain unregulated, much as the internet was in its early days, while its application, particularly in sensitive industries like gambling, requires strict oversight to ensure compliance with existing regulations.

Challenges and Successes in Cybersecurity

The pace of technological evolution remains a significant challenge in cybersecurity. As technology advances, so do the tactics of cybercriminals. Success in the field is measured by the ability to protect business assets effectively, and the increasing caliber of professionals entering cybersecurity roles contributes to that success by fostering continual learning and improvement.

Advice for Cybersecurity Leaders

For Chief Information Security Officers (CISOs), a crucial piece of advice is to embrace curiosity by asking even the most fundamental questions. This approach can illuminate risks and challenges that may not be immediately apparent, fostering a culture of openness and proactive risk management.

In conclusion, as AI continues to shape the future of cybersecurity, stakeholders must navigate its complexities while ensuring robust security measures are in place.