AI in 2026: Financial Services Races Ahead, but Risk and Governance Must Catch Up

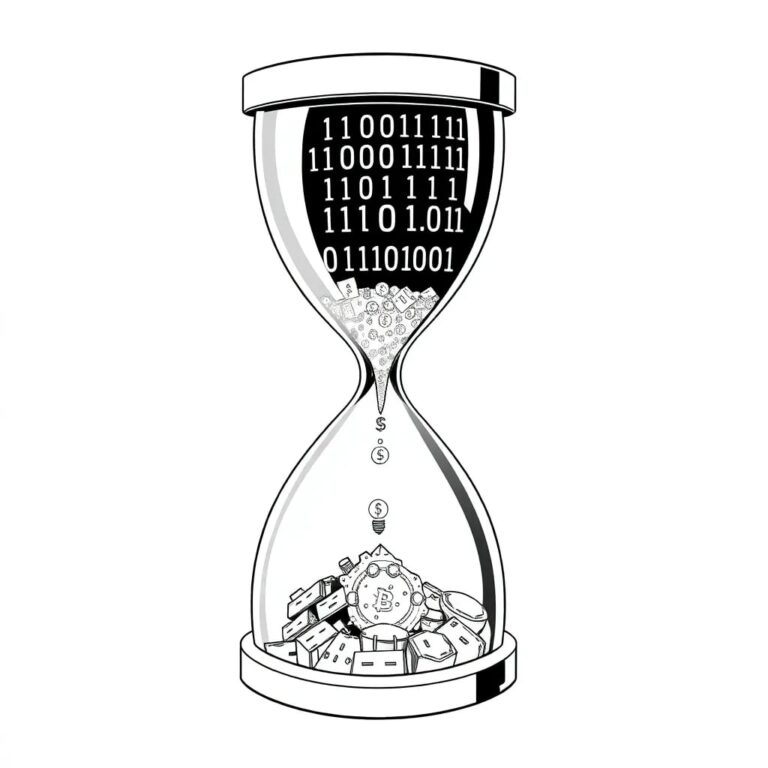

As we progress further into 2026, it is evident that Artificial Intelligence (AI) has become deeply integrated within financial services. However, the accompanying governance and oversight frameworks have not evolved at the same pace, leading to increasing operational, regulatory, and trust risks for firms and advisers.

The Current Landscape of AI in Finance

AI is no longer a distant ambition; it is now a core component of daily operations in banks, insurers, and wealth management firms. Applications range from real-time fraud detection and credit decisioning to more personalized client experiences. For advisers, this integration means:

- Faster onboarding

- Data-driven portfolio insights

- Digital engagement becoming the norm

The Challenge of Governance

Despite the advancements in AI, the governance surrounding it has lagged. Many firms still depend on outdated frameworks designed for static models, which are ill-equipped to handle AI’s dynamism. This gap has created vulnerabilities in:

- Operational resilience

- Regulatory readiness

- Cyber defense

If these issues remain unaddressed, they could severely undermine client trust.

Mainstreaming AI Decision-Making

AI-enabled decisions now shape many core financial operations, including:

- Credit approvals

- Fraud alerts

- Risk profiling

- Client segmentation

Unlike traditional systems, modern AI models are adaptive, adjusting as new data becomes available. Legacy controls that rely on historical backtests and point-in-time validation are inadequate for models that keep changing after sign-off. Without continuous oversight, firms risk:

- Performance drift

- Hidden biases that affect outcomes

- Difficulty in explaining decisions to clients and regulators

Enhancing Model Risk Management

While traditional model risk management remains important, it requires enhancement to accommodate AI. Firms should implement:

- Continuous monitoring

- Lifecycle governance

- Rigorous documentation of data lineage, training, testing, and updates

This focus on governance is not solely about compliance; it is vital for maintaining resilience. Unmonitored models can degrade over time, and the resulting problems may only become apparent to clients or regulators after significant damage has been done.
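To make continuous monitoring more concrete, the sketch below shows one common way to flag drift: comparing the distribution of a model's recent scores against a baseline using the population stability index (PSI). It is an illustration only, not a prescribed method. The function names, the bin edges, and the 0.1 and 0.25 thresholds (a widely quoted rule of thumb) are assumptions introduced here, and a firm would set its own in line with its risk appetite.

```python
import math


def population_stability_index(baseline, recent, bin_edges):
    """Return the PSI between two samples of model scores.

    bin_edges is an ascending list of boundaries; values outside the
    outermost edges are ignored. All names here are illustrative.
    """
    def bin_shares(values):
        counts = [0] * (len(bin_edges) - 1)
        for v in values:
            for i in range(len(counts)):
                is_last = i == len(counts) - 1
                in_bin = bin_edges[i] <= v < bin_edges[i + 1]
                # The final bin also includes its upper edge.
                if in_bin or (is_last and v == bin_edges[-1]):
                    counts[i] += 1
                    break
        total = sum(counts)
        if total == 0:
            raise ValueError("no values fall inside the bin edges")
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / total, 1e-6) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(recent)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def drift_status(psi, watch=0.1, investigate=0.25):
    """Map a PSI value to an action label (thresholds are assumptions)."""
    if psi >= investigate:
        return "investigate"
    if psi >= watch:
        return "watch"
    return "stable"


# Hypothetical score samples: validation-time baseline vs. last month.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
recent_scores = [0.6, 0.7, 0.8, 0.8, 0.85, 0.9, 0.9, 0.95]

psi = population_stability_index(baseline_scores, recent_scores, edges)
print(drift_status(psi))
```

Run on a schedule and logged alongside the model's documentation, a check of this kind gives a dated, auditable record of when a model's behavior began to shift, rather than relying on a one-off validation.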

A Broader Cyber Threat Landscape

The integration of AI also widens the attack surface and introduces new attack vectors. Emerging threats include:

- Data poisoning

- Model inversion

- Model stealing

- Prompt injection attacks

To effectively protect against these sophisticated threats, firms must combine cybersecurity discipline with an understanding of AI’s learning and behavioral patterns, especially when client data and trust are at stake.

Board Accountability and Ethical Oversight

Responsibility for AI governance increasingly sits with boards and senior leadership, alongside their existing oversight of cybersecurity and financial controls. This includes ensuring that staff are trained not only to use AI tools but also to understand the associated regulatory, reputational, financial, ethical, and operational risks and how to manage them. For advisers, this emphasis on ethical AI aligns with their professional duties and fosters confidence in the advice process.

Regulation and Compliance

Regulatory expectations are tightening, with frameworks such as the EU AI Act pushing firms to embed responsible AI principles from the outset. This includes:

- Risk tiering

- Documentation

- Transparency

- Post-market monitoring

Even if advisers are not directly impacted, they will feel the effects through platform providers and data partners. In 2026, compliance is not merely about avoiding penalties; it is about demonstrating trustworthiness in a digital advice ecosystem.

Governance as a Competitive Advantage

The differentiating factor in 2026 will be not just the extent of AI deployment but the intelligence of its governance. Firms that invest in robust oversight, transparency, and ethical frameworks will innovate confidently, protect clients, and respond credibly to regulatory scrutiny. For independent financial advisers (IFAs) and their clients, effective AI governance is fundamental to trust, resilience, and the credibility of financial advice in an increasingly automated world.