U.S.-Iran Conflict Spotlights AI Ethics and Market Risks

The ongoing conflict involving the U.S., Israel, and Iran has brought significant attention to the ethical implications of artificial intelligence (AI) and the associated market risks. Central to this discourse is the recent lawsuit between the U.S. Department of Defense and the AI company Anthropic, which has financial ties to Microsoft.

Microsoft’s Position

Microsoft has publicly supported Anthropic in the lawsuit, as the tech giant holds a stake in the company. The collaboration between Microsoft and Anthropic means that any restrictions placed on Anthropic’s operations could adversely affect Microsoft’s business interests.

Expert Insights

On March 17, Kim Hak-joo, a professor at Handong University’s AI Convergence Department, shared insights on the economic implications of the conflict during a feature on the Chosun Ilbo’s YouTube channel, Chosun Ilbo Money. He argued that the war will fundamentally shape the scope of AI utilization, control, and ethical considerations.

The Lawsuit’s Background

The lawsuit stems from the Department of Defense’s attempts to use Anthropic’s AI model, ‘Claude’, for military purposes without restrictions. Anthropic objected, citing ethical concerns about AI’s role in warfare, and the dispute ended up in court.

Ethical Dilemmas in AI

Anthropic, founded in 2021 by Dario Amodei, previously a vice president at OpenAI, emphasizes ethical constraints in AI development. Amodei left OpenAI citing concerns over the ethical trajectory of AI technologies. This ethical divide is stark when juxtaposed with the Department of Defense’s directive to “win by any means necessary” in wartime scenarios.

Historical Context

The relationship between tech companies and military applications is not new. Google has historically engaged with the Department of Defense on various projects, including quantum computing. However, military uses of AI have drawn criticism from within the field. Geoffrey Hinton, for example, resigned from Google in 2023 so that he could speak freely about the risks of AI, and he has warned about its use in lethal weapons.

Potential Consequences

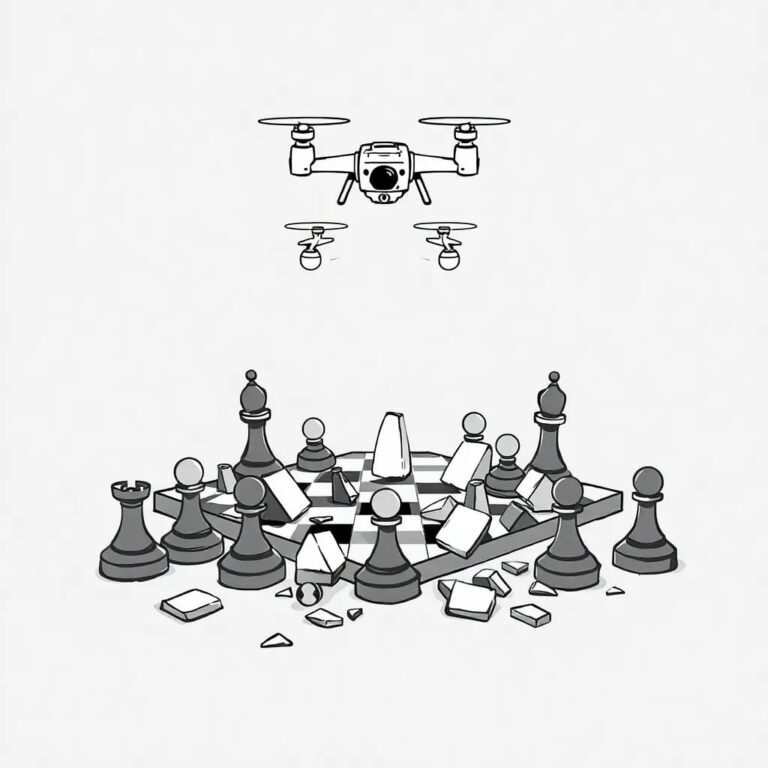

There is growing concern that unrestrained AI could escalate global conflict. The use of autonomous drones in the Russia-Ukraine war has already demonstrated how destructive such systems can be. Using AI to execute military operations without ethical oversight poses an existential threat.

Geopolitical Implications

The U.S. has critical strategic interests in the Middle East, particularly in the oil trade. By targeting Iran and Venezuela, both key players in oil markets, the U.S. aims to disrupt China’s energy security. Such moves risk escalating tensions and altering the global balance of power.

China’s Response

In response to potential disruptions in oil supply, China may accelerate its development of small nuclear reactors, reflecting a shift in strategy to ensure energy security. This geopolitical chess game underscores the importance of monitoring developments in military and energy sectors.

Economic Considerations

The article warns that rising oil prices could burst the AI bubble, drawing a parallel to the 2011 Arab Spring, when oil prices surged without triggering inflation. The current economic environment, however, lacks the low-cost labor dynamics that mitigated inflationary pressures at that time, which increases the risk of stagflation.

Despite these challenges, AI development is unlikely to halt. Data centers are proliferating, and AI continues to evolve from learning to reasoning capabilities, indicating a persistent trajectory of advancement.

Investors and stakeholders must remain vigilant as these dynamics unfold. The interplay between AI ethics, military applications, and global economics could redefine the landscape of both technology and international relations.