AI Act: The Great Divider of AI Practice?

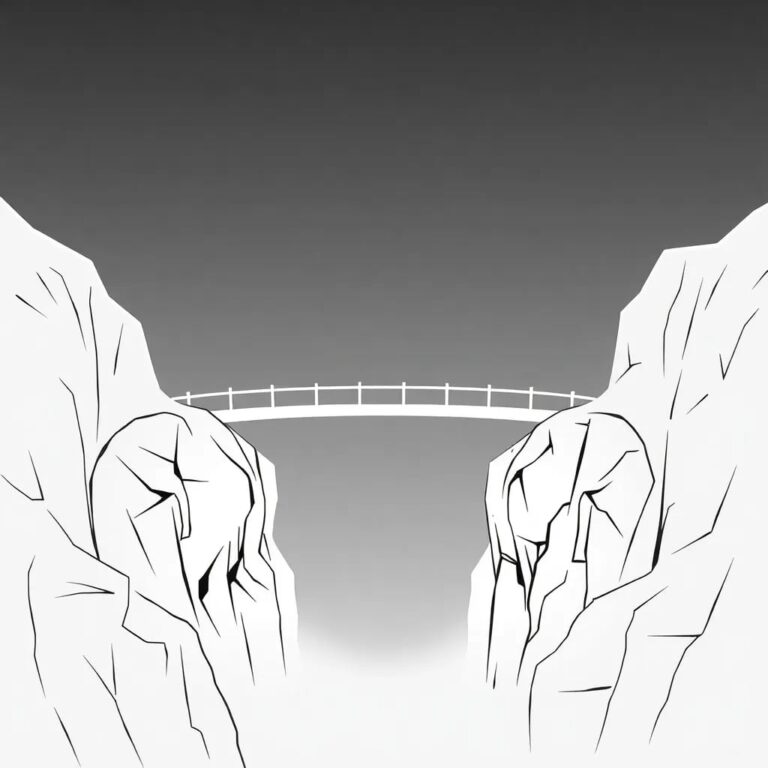

Until now, organizations' maturity in building AI applications has existed on a continuum. The proposed AI Act is set to establish a maturity threshold, dividing organizations into two groups: those that can comply with the regulation and continue to develop AI systems themselves, and those that cannot.

What is the Artificial Intelligence Act?

In recent years, Artificial Intelligence (AI) has seen colossal investments, reaching $500 billion worldwide in 2023. This surge has led to significant advancements, including foundation models such as those behind ChatGPT. The potential contribution of AI to the global economy is estimated to reach $15.7 trillion by 2030. The increasing use of AI across sectors like healthcare, financial services, and retail has highlighted the need to control potential risks and abuses, prompting the development of AI-specific legislative frameworks. The European Union aims to take the lead with its Artificial Intelligence Act.

The AI Act defines AI systems as “software that can generate results that influence its environment, created by machine learning, logic, and knowledge-based or statistical approaches.” This broad definition necessitates categorizing AI systems according to their risk level and applying differentiated requirements, particularly as many impactful systems will be classified as ‘high risk’.

Pitfalls Organizations Might Struggle to Avoid

While the final regulations are yet to be established, the intentions of the legislators are becoming clear. This analysis connects those intentions regarding high-risk AI systems with common struggles faced by AI teams in practice.

Lack of Data Governance

Once the new European regulation is enacted, organizations will have to define proper data governance principles for the datasets used in AI development. This encompasses dataset design, collection, preparation (cleaning, enrichment, labeling), and quality, particularly with regard to potential bias. Many companies have not yet organized themselves around these principles and handle data informally. If that informal culture persists, it will put them out of line with the intentions of the AI Act.
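As an illustration of what a formalized data quality check could look like, the sketch below compares the share of positive labels across groups in a dataset. It is a minimal example under stated assumptions, not something the AI Act prescribes: the field names `region` and `label`, the helper names, and the idea of using a rate gap as a bias signal are all hypothetical choices made here.

```python
from collections import Counter


def positive_rate_by_group(records, group_key, label_key):
    """Return the share of positive labels for each group in the dataset."""
    totals = Counter()
    positives = Counter()
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[label_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def max_rate_gap(rates):
    """Largest difference in positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical toy dataset: field names and values are illustrative only.
records = [
    {"region": "A", "label": 1}, {"region": "A", "label": 1},
    {"region": "A", "label": 0}, {"region": "A", "label": 0},
    {"region": "B", "label": 1}, {"region": "B", "label": 0},
    {"region": "B", "label": 0}, {"region": "B", "label": 0},
]

rates = positive_rate_by_group(records, "region", "label")
gap = max_rate_gap(rates)
```

A large gap does not prove bias on its own, but recording such a figure for every dataset version is one way to turn an informal habit into a documented practice.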

Insufficient Testing

The regulation emphasizes the need for appropriate testing procedures, including train-test splitting, formalized metrics, and probabilistic thresholds to ensure compliance with best practices. However, constructing sound train-test splits, in which the test set is truly independent of the training data, proves challenging even for experienced data scientists, and this often leads to inadequate benchmarking against the relevant metrics.
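One common way to keep a split independent is to assign whole groups (for example, all records of one customer) to either the training or the test set, and to fix the acceptance threshold before looking at the results. The sketch below shows this idea with the standard library only; the function names, the hash-based assignment, and the threshold values are assumptions for illustration, not requirements taken from the regulation.

```python
import hashlib


def assign_split(group_id: str, test_fraction: float = 0.2) -> str:
    """Deterministically place a whole group in 'train' or 'test'.

    Hashing the group identifier keeps every record of that group on
    the same side of the split, across runs and across machines.
    """
    digest = hashlib.sha256(group_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # value in [0, 1)
    return "test" if bucket < test_fraction else "train"


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def meets_threshold(y_true, y_pred, min_accuracy):
    """Check a formalized metric against a threshold agreed on in advance."""
    return accuracy(y_true, y_pred) >= min_accuracy
```

For instance, `meets_threshold([1, 0, 1, 1], [1, 0, 0, 1], 0.7)` passes because three of four predictions are correct, while the same predictions fail a threshold of 0.8.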

Insufficient Risk Management

The proposal requires organizations to establish, implement, and maintain a risk management system that involves the iterative identification and analysis of known and foreseeable risks. This may be problematic for organizations new to AI, as effective risk frameworks require maturity, and immature frameworks can stifle innovation.

Insufficient Post-Market Monitoring

Beyond AI system creation, providers must implement a post-market monitoring system to assess continuous compliance with regulations. This involves collecting and analyzing information from users throughout the AI system’s lifecycle, addressing long-term risks. Many companies currently fail to adequately monitor value or risks associated with their AI solutions.
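Part of such monitoring can be automated. As one hedged example, the sketch below computes a population stability index (PSI) to compare the distribution of a categorical input at development time with what the system sees in production. Choosing PSI, the smoothing constant `eps`, and the function names are assumptions made for this illustration; the proposal does not name any specific monitoring technique.

```python
import math
from collections import Counter


def proportions(values, categories, eps=1e-6):
    """Share of each category, floored at eps to avoid log(0)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = Counter(values)
    total = len(values)
    return {c: max(counts[c] / total, eps) for c in categories}


def population_stability_index(baseline, live):
    """PSI between a baseline sample and a live sample of one feature.

    Zero means identical distributions; larger values mean the live
    data has drifted further from the baseline.
    """
    categories = set(baseline) | set(live)
    expected = proportions(baseline, categories)
    actual = proportions(live, categories)
    return sum(
        (actual[c] - expected[c]) * math.log(actual[c] / expected[c])
        for c in categories
    )
```

Running this on a schedule and logging the result gives a simple, auditable signal for when a deployed system should be re-evaluated.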

Insufficient Technical Documentation

Producers of AI systems are obligated to provide transparency by maintaining scrupulous technical documentation. This includes system descriptions, interactions with external software/hardware, development processes, risks, and compliance systems. Many organizations lack the necessary maturity to fulfill these documentation standards.

Impact on the AI Development Landscape

The common deficiencies in current practices, combined with the forthcoming requirements of the AI Act, present significant challenges. The EU’s approach, reminiscent of the GDPR, aims to create a forward-looking regulation that allows room for innovation. However, it also clearly defines a playing field that demands maturity and risk awareness from organizations.

As a result, organizations may face a division: those mature enough to develop high-risk AI systems and those that will struggle to do so. Early-stage companies, which often place little emphasis on governance, may find their experimentation limited or be forced to tackle broader organizational development first.

In conclusion, while the AI Act aims to address important risks efficiently, the potential for severe fines (up to €30 million or 6% of annual turnover, whichever is higher under the proposal) could lead to a stark division in the AI landscape.
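To give a sense of scale, the upper bound on such a fine can be worked out in a few lines. This is a rough sketch that assumes the higher of the two amounts applies, as in the Commission's proposal; the function name is hypothetical, and actual fines would depend on the final text and on the specific infringement.

```python
def max_fine_eur(annual_turnover_eur: float) -> float:
    """Upper bound of the fine: the higher of EUR 30 million or 6% of turnover."""
    fixed_cap = 30_000_000
    turnover_cap = 6 * annual_turnover_eur / 100
    return max(fixed_cap, turnover_cap)


small_company = max_fine_eur(100_000_000)    # 6% would be 6 million, so the fixed cap applies
large_company = max_fine_eur(1_000_000_000)  # 6% is 60 million, above the fixed cap
```

Under this assumption the two caps meet at an annual turnover of €500 million; below that, the €30 million figure dominates.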